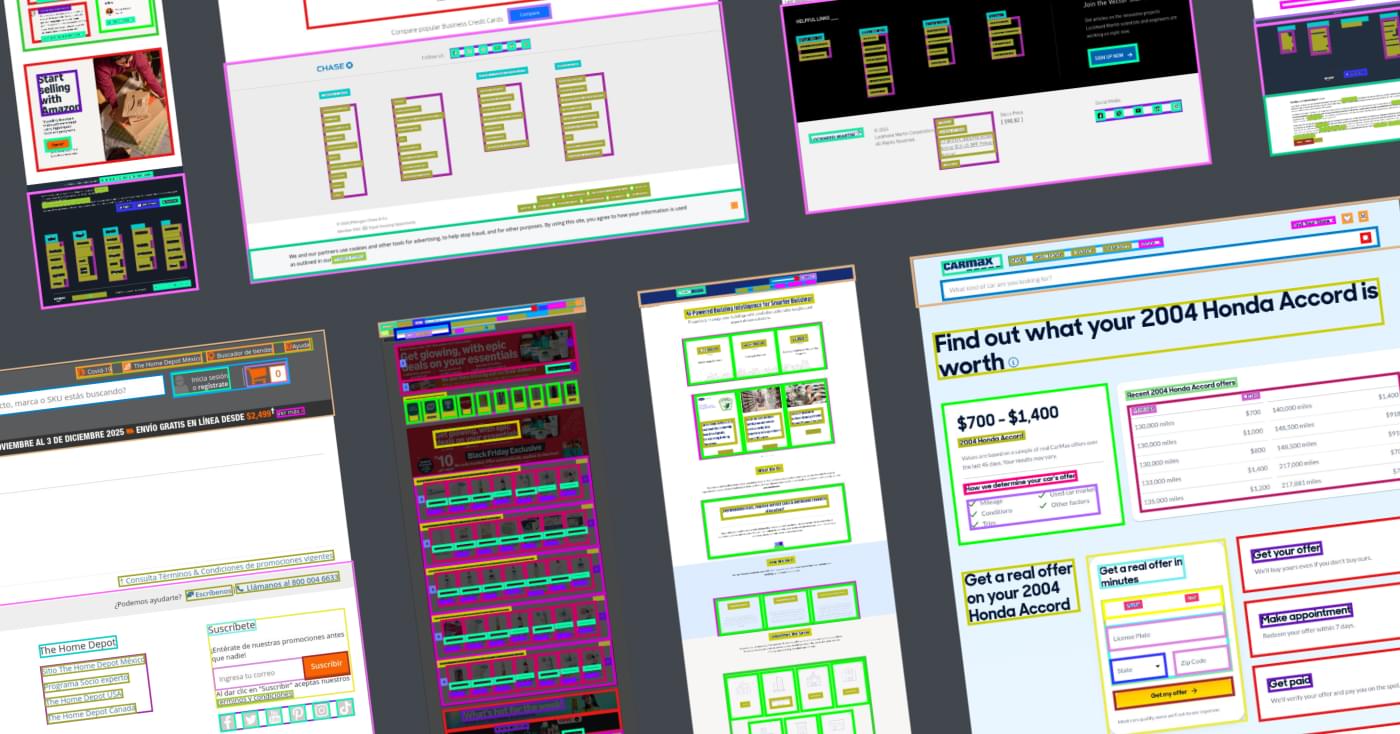

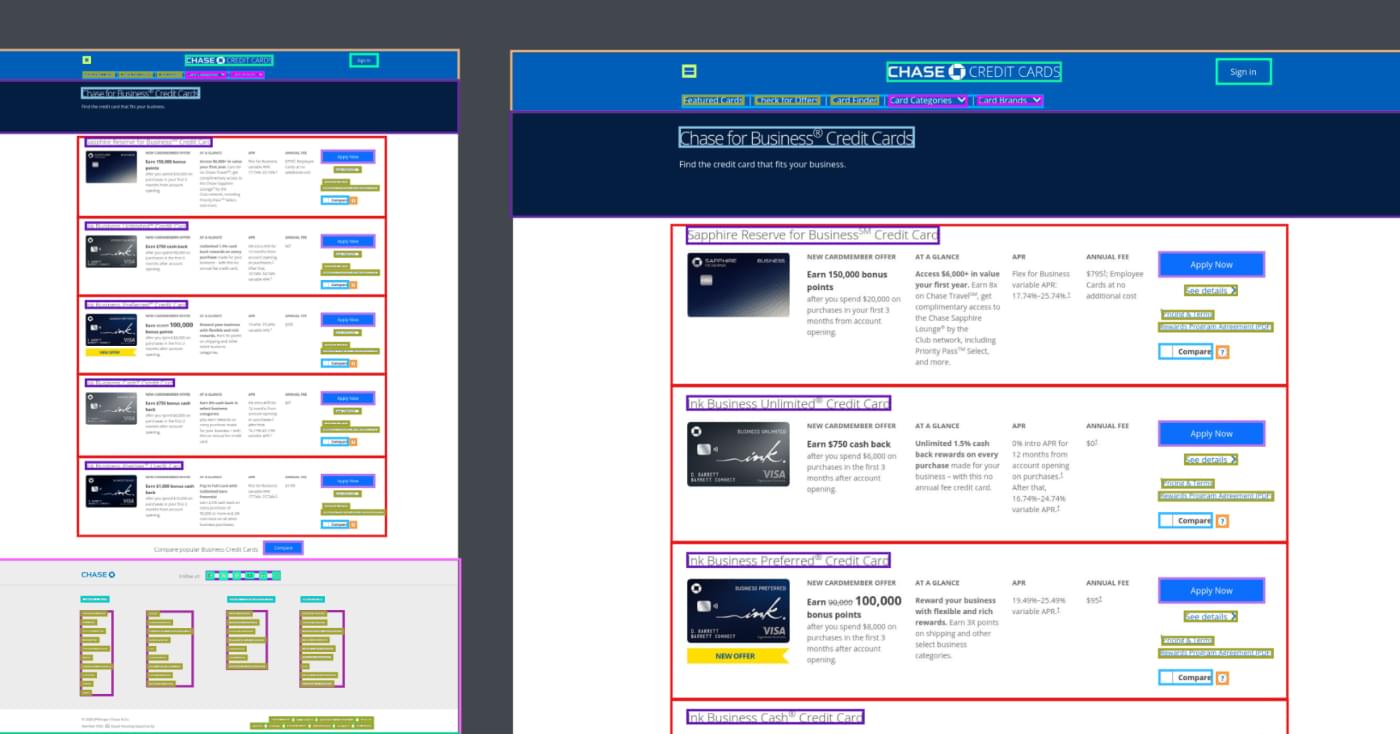

The second part of the collaboration focused on how LLM-based models analyze and validate source code in real-world development scenarios. This project addressed the practical review and validation of web interface solutions designed for users with disabilities. A key principle of the work was manual expert review: a highly specialized web accessibility expert from Keymakr validated outputs from AI assistants and coding systems to ensure the proposed code met accessibility requirements.

The expert’s role went far beyond formal syntax checks or high-level recommendations. Each request required contextual analysis and an understanding of the interface logic, possible interaction scenarios, and potential corner cases.

Two primary expert workflows emerged as a result:

-

Analysis for accessible design functionality.

Here, the task was to structure requirements: distinguish between critical, desirable (nice-to-have), and optional elements, and define the correct implementation sequence. The value in these cases lies in outlining the solution's structure, as the order of actions and implementation logic is often critical for accessibility-related tasks. So, the Keymakr expert had to provide actionable recommendations for the model to learn from.

-

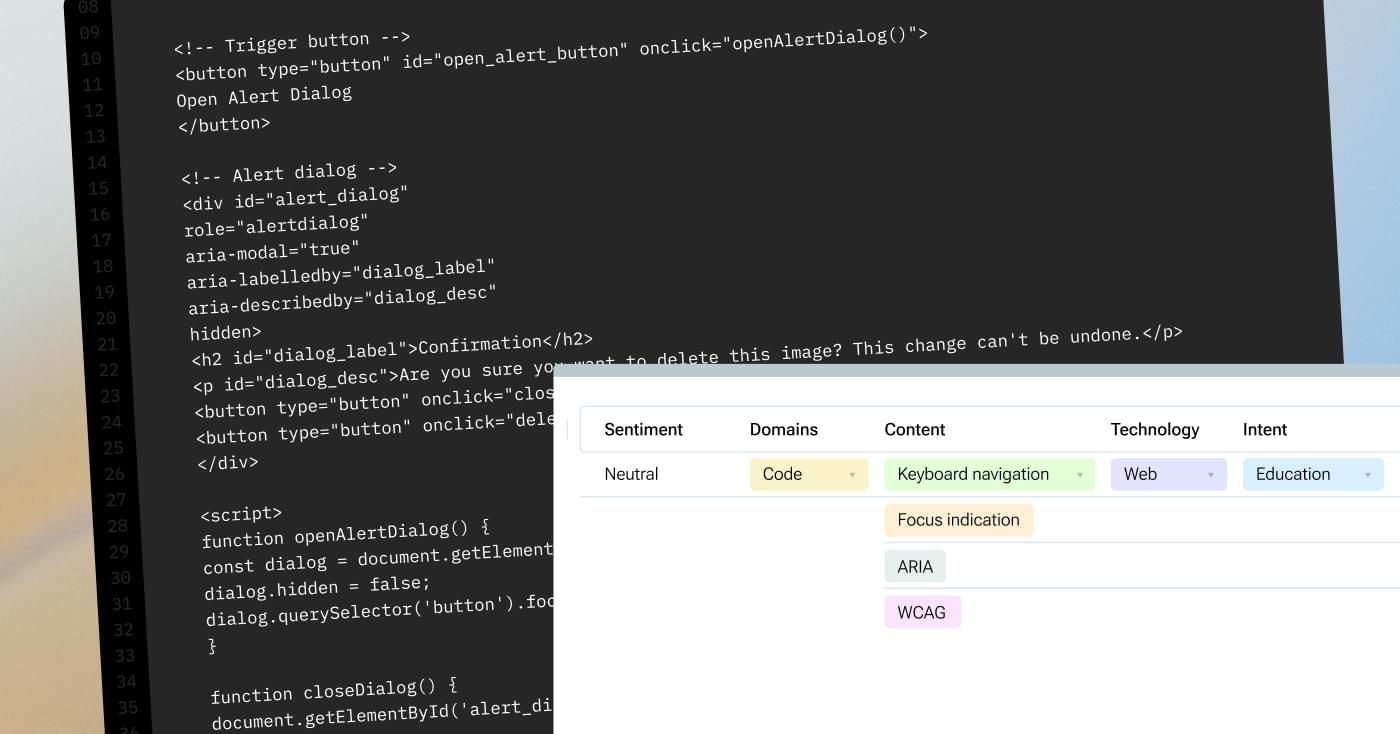

Evaluation of existing code fragments.

Detailed breakdown of which parts were implemented correctly, where accessibility standards were violated, and how those violations should be remediated. Typical requests concerned interactive elements and components with complex behavior. The analysis accounted for formal criteria, including corner cases, content type, website domain, underlying technologies, and realistic interaction scenarios.

The expert could not rely on superficial feedback such as “this is incorrect: change required.” Each response required a detailed conclusion explaining the nature of the issue, the functionality it affected, its impact, which requirements were critical, and why the solution needed to be implemented in a specific way. Where relevant, the expert cited external standards and guidelines, such as WebAIM resources.

The effort per request varied significantly. Some cases took around 20 minutes, while others took several hours, depending on task complexity, the volume of supporting materials, and the depth of corner-case analysis. The overall pool included approximately 800 unique cases, each contributing to a validated expert knowledge base.

The core value of this project lies in building a durable repository of expert knowledge. Verified, well-structured answers were used both to support development workflows and to facilitate subsequent validation of web resources, particularly government and quasi-government websites that are legally required to comply with accessibility regulations. In this way, the system served a dual purpose: supporting the creation of accessible interfaces and acting as a compliance validation mechanism aligned with a common baseline standard and country-specific regulatory requirements.