Complete Guide to LLMs for Synthetic Data

Training modern artificial intelligence models requires volumes of information that may soon exceed the supply of high-quality content available on the internet. Synthetic generation is becoming one of the main ways to provide algorithms with the fuel they need for training. Because well-constructed synthetic data does not describe real individuals, it can reduce exposure to regulatory restrictions such as GDPR, provided the records cannot be traced back to actual people. This lowers the risk of personal information leaking during development or testing.

Real-world datasets are often skewed toward typical cases. Synthetic data makes it possible to generate rare scenarios on purpose, producing a more balanced training set. Models often struggle with rare or dangerous situations that are difficult to capture in reality, such as industrial accidents or rare medical conditions. A synthetic environment allows such examples to be created safely and in large numbers, which improves system resilience.

Thus, synthetic data is transforming into a tool that accelerates innovation where access to real information is blocked due to legal, ethical, or physical barriers.

Quick Take

- Synthetic data is a practical way to supply AI with training data as high-quality internet resources run short.

- Because the data is artificial and does not describe real people, it reduces privacy and legal risks.

- LLMs can create a wide range of content: from lifelike dialogues to structured program code.

- Filtering and validation are necessary to keep hallucinations and errors out of the training data.

- The best results come from combining a real "core" of knowledge with a synthetic expansion for rare cases.

LLM Capabilities in Creating Artificial Worlds

Modern language models have brought a breakthrough in creating artificial information. Previously, this work relied on statistical formulas and hand-written rules, which could not convey the nuances of human communication. Today, the technology can produce texts and scenarios that are often hard to distinguish from the real thing. This opens new opportunities for training systems on high-quality and safe content.

Advantages of Using Language Models

Generating synthetic data has become much simpler because language models can follow context and complex instructions. They work especially well with text and dialogue because they have learned from vast amounts of human writing. This allows them to create varied examples that do not repeat each other and that cover different speaking styles.

Another important feature is the ability to produce structured outputs. The model can generate clearly organized data, such as tables, JSON records, or program code. Developers receive datasets that need little reformatting before use. This speeds up LLM data generation, although the accuracy of the output still has to be verified.
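Structured output is only useful if every record actually follows the expected structure. The following is a minimal sketch of such a check, assuming the model was asked to return one JSON object per sample. The field names in `REQUIRED_FIELDS` and the function `parse_record` are hypothetical and chosen for illustration only.

```python
import json

# Hypothetical schema for illustration: field name -> expected Python type.
REQUIRED_FIELDS = {"question": str, "answer": str, "topic": str}


def parse_record(raw: str):
    """Return the parsed record, or None if it does not match the schema."""
    try:
        record = json.loads(raw)
    except json.JSONDecodeError:
        return None  # the model produced text that is not valid JSON
    if not isinstance(record, dict):
        return None  # valid JSON, but not an object (e.g. a list)
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(record.get(name), expected_type):
            return None  # missing field or wrong type
    return record


raw_outputs = [
    '{"question": "What is GDPR?", "answer": "An EU privacy law.", "topic": "law"}',
    "Sure! Here is your JSON:",
]
valid = [r for r in map(parse_record, raw_outputs) if r is not None]
```

Rejecting malformed records early keeps the later filtering and validation stages simpler, because they can rely on every record having the same fields.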

Main formats of artificial content

Language models make it possible to build entire libraries of knowledge for a wide range of development needs. This is vital for a privacy-preserving data strategy, since synthetic copies let systems be trained without touching real confidential records. It also helps with data augmentation, when existing databases need to be expanded with new, unique examples.

Methods of Creation and Verification of Artificial Data

There are several ways to get models to generate usable content while avoiding errors that could ruin the final product. Combining different technical approaches skillfully provides a reliable basis for training AI systems.

Popular Information Generation Techniques

Each team chooses its own approach to creating data depending on the project goal and the available computing resources. The simplest option is prompt-based generation, where the developer describes the desired result in a prompt and the model produces it. More advanced methods involve self-instruction, where the model creates new tasks and detailed answers to them on its own, starting from a few seed examples.

Developers also frequently use these methods:

- Data augmentation to expand existing datasets by creating variations that preserve the meaning of the original examples.

- Controlled generation where output data is strictly limited by set rules or formats.

- Retrieval + generation or the RAG approach, where the model first searches for facts in external documents and then forms accurate answers based on them.
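Self-instruction can be sketched as a prompt-building step: a few seed tasks are sampled and the model is asked to continue the pattern. The example below is a minimal sketch under stated assumptions. The function `build_self_instruct_prompt`, the keys `instruction` and `output`, and the prompt wording are all hypothetical, and the call to an actual model is left out.

```python
import random


def build_self_instruct_prompt(seed_tasks, n_new=3, n_shots=2, rng=None):
    """Build a few-shot prompt asking a model for new task/answer pairs.

    seed_tasks: list of dicts with hypothetical keys 'instruction' and 'output'.
    """
    rng = rng or random.Random(0)  # fixed seed keeps the sketch reproducible
    shots = rng.sample(seed_tasks, k=min(n_shots, len(seed_tasks)))
    lines = ["Here are example tasks with answers:"]
    for i, task in enumerate(shots, start=1):
        lines.append(f"{i}. Task: {task['instruction']}")
        lines.append(f"   Answer: {task['output']}")
    lines.append(
        f"Write {n_new} new, different tasks with answers in the same format."
    )
    return "\n".join(lines)


seeds = [
    {"instruction": "Translate 'good morning' into French.", "output": "Bonjour."},
    {"instruction": "Name the capital of Japan.", "output": "Tokyo."},
    {"instruction": "Give a synonym for 'quick'.", "output": "Fast."},
]
prompt = build_self_instruct_prompt(seeds)
```

Sampling a different subset of seed tasks for each request is one simple way to push the model toward more varied outputs.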

How to Ensure High-Quality Results

Since artificial data is created by a machine, it requires mandatory verification before use in serious projects. The most important stage is automatic validation, which allows for the instant filtering of technical defects or empty files. It is also necessary to perform deduplication to avoid repetitions that could confuse the future model during training.

Essential control tools include:

- Checking the factual accuracy of every generated statement.

- Searching for and identifying hidden bias in texts.

- A selective review of results by a human reviewer.

- Comparing the structure of artificial data with real samples to confirm its naturalness.
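Automatic validation and deduplication can start with very simple rules. The sketch below assumes that samples are plain strings, that exact duplicates after normalizing case and whitespace are the target, and that a minimum length of 20 characters is a reasonable cutoff. The threshold and the function names are illustrative assumptions. Near-duplicate detection would need a fuzzier technique than the exact hashing shown here.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace."""
    return re.sub(r"\s+", " ", text.strip().lower())


def filter_and_dedup(samples, min_chars=20):
    """Drop empty or very short samples and exact duplicates after normalization."""
    seen = set()
    kept = []
    for text in samples:
        norm = normalize(text)
        if len(norm) < min_chars:
            continue  # technical defect: empty or too short
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # same content already kept
        seen.add(digest)
        kept.append(text)
    return kept


samples = [
    "",
    "The patient reports   mild chest pain after exercise.",
    "the patient reports mild chest pain after exercise.",
    "Too short",
]
clean = filter_and_dedup(samples)
```

Storing hashes instead of full texts keeps memory use low when the dataset contains millions of samples.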

Potential Threats and Technology Limitations

Despite the enormous advantages, working with artificial information has its pitfalls. One of the biggest problems is hallucinations, where the model confidently generates fabricated facts that look real. Pattern repetition is also common, where the algorithm starts producing sentences with the same structure, making the dataset monotonous.

Other risks worth highlighting:

- Reinforcing existing stereotypes and biases that were in the model itself.

- Overfitting, where the system becomes too accustomed to artificial examples and loses the ability to handle real-world input.

- Mismatch with the real data distribution, which leads to errors in predictions.
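Pattern repetition can be estimated with a simple diversity score. The sketch below computes the share of unique word n-grams across a dataset, a measure often called distinct-n. Whitespace tokenization and the function name `distinct_n` are simplifying assumptions made for this illustration.

```python
def distinct_n(texts, n=2):
    """Return the ratio of unique word n-grams to all n-grams in the texts.

    Values near 1.0 suggest varied wording; values near 0.0 suggest
    that the same phrases are repeated across the dataset.
    """
    total = 0
    unique = set()
    for text in texts:
        tokens = text.lower().split()  # naive whitespace tokenization
        for i in range(len(tokens) - n + 1):
            unique.add(tuple(tokens[i:i + n]))
            total += 1
    return len(unique) / total if total else 0.0


monotonous = ["the model is good", "the model is good"]
varied = ["the model is good", "dogs chase red balls"]
score_monotonous = distinct_n(monotonous)
score_varied = distinct_n(varied)
```

Tracking such a score per generation batch makes it easier to notice when a prompt starts producing monotonous output.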

Model Improvement Strategy

Although modern algorithms can generate billions of lines of text, true experience and the unpredictability of the real world remain an indispensable benchmark. The correct combination of these resources allows for the creation of stable systems that work equally well with both typical and rare queries.

Balance Between Real and Artificial Information

Synthetic data should rarely replace real data entirely, because it is derived from knowledge the generating model already has. Without a constant influx of fresh real information, models risk reinforcing their own errors, which leads to a loss of quality. A hybrid approach works best: real data forms a reliable core, and synthetic data is used to expand the base and fill gaps in complex topics.

This balance is ideal for scenarios where:

- Real data is very scarce or too expensive to collect.

- User privacy needs protection by replacing personal details with fictional ones.

- It is necessary to test the system's operation in extreme conditions that have not yet occurred in the company's history.
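The hybrid approach can be sketched as a mixing step that keeps every real example and caps the share of synthetic ones. The function `mix_datasets` and the default share of 30% are assumptions chosen for illustration, not a recommended ratio.

```python
import random


def mix_datasets(real, synthetic, synthetic_fraction=0.3, seed=0):
    """Keep all real examples and add synthetic ones up to a target share.

    synthetic_fraction is the desired share of synthetic examples in the
    final mix. If too few synthetic examples exist, all of them are used.
    """
    if not 0 <= synthetic_fraction < 1:
        raise ValueError("synthetic_fraction must be in [0, 1)")
    rng = random.Random(seed)
    # Solve s / (r + s) = f for s, where r is the number of real examples.
    target = round(synthetic_fraction * len(real) / (1 - synthetic_fraction))
    n_synthetic = min(target, len(synthetic))
    mixed = list(real) + rng.sample(list(synthetic), n_synthetic)
    rng.shuffle(mixed)
    return mixed


real = [("real", i) for i in range(70)]
synthetic = [("syn", i) for i in range(100)]
mixed = mix_datasets(real, synthetic, synthetic_fraction=0.3)
```

Keeping the real core intact and only sampling the synthetic part means that changing the ratio never discards real examples.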

Using Artificial Sets for Fine-tuning

Specialized fine-tuning of models becomes much more effective thanks to high-quality synthetic datasets. This allows developers to literally "program" the AI's behavior by setting the desired tone of communication or depth of knowledge in a specific field.

Synthetic sets are most helpful in these tasks:

- Instruction tuning to help the model better understand and accurately execute complex commands.

- Domain adaptation, where a general model needs to quickly learn terminology in medicine, law, or programming.

- Low-resource tasks where there are almost no ready-made texts online for certain languages or rare dialects.

- Model testing by creating complex test questions that might trip up the algorithm.

Practical Workflow for Creating a Synthetic Dataset

Creating an artificial dataset is a clearly planned engineering process. To obtain a result that truly improves the model, rather than spoiling it with hallucinations, a team must go through several important stages. Each step allows for filtering out errors and ensuring that the data matches the set goal.

Step-By-Step Development Algorithm

- Task definition. At the very beginning, it is important to clearly understand what exactly the model lacks. This could be specific medical dialogues, code writing examples, or complex legal conclusions.

- Prompt strategy development. The team creates and tests instructions for the language model. At this stage, it is decided whether it will be direct generation or a more complex approach where the model first creates a plan and then details it.

- Mass generation. Once the ideal instruction is found, a large-scale process is launched. At this stage, it is important to set up automation to obtain thousands or millions of unique examples in a short time.

- Filtering. The generated data passes through a series of filters. Automated checks remove responses that are too short, repetitions, profanity, and texts that do not match the specified format.

- Validation. The best samples are checked for factual accuracy. This can be done using another, more powerful model or by involving experts who confirm the correctness of the content.

- Testing on the model. The final stage, where the model trained on synthetics is checked on real tasks. Only after successful tests is the dataset considered suitable for use in the main product.
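The generation, filtering, and validation steps above can be sketched as one loop. In this minimal sketch, `generate`, the entries of `filters`, and `validate` are hypothetical callables supplied by the team. The stub generator below stands in for a real model call and exists only to make the example runnable.

```python
def run_pipeline(prompts, generate, filters, validate):
    """Generate one sample per prompt, then filter and validate it.

    generate(prompt) -> str          hypothetical model call
    filters: list of f(sample) -> bool, cheap automatic checks
    validate(prompt, sample) -> bool, slower accuracy check
    """
    accepted = []
    stats = {"generated": 0, "filtered_out": 0, "rejected": 0, "accepted": 0}
    for prompt in prompts:
        sample = generate(prompt)
        stats["generated"] += 1
        if not all(check(sample) for check in filters):
            stats["filtered_out"] += 1  # failed a cheap format check
            continue
        if not validate(prompt, sample):
            stats["rejected"] += 1  # failed the accuracy check
            continue
        accepted.append({"prompt": prompt, "response": sample})
        stats["accepted"] += 1
    return accepted, stats


def stub_generate(prompt):
    """Stand-in for a real model call, used only for this example."""
    return f"A detailed synthetic answer to: {prompt}"


def long_enough(sample):
    return len(sample) >= 20


def always_valid(prompt, sample):
    return True  # placeholder for a stronger model or an expert review


dataset, stats = run_pipeline(
    ["Explain overfitting.", "Define deduplication."],
    stub_generate,
    [long_enough],
    always_valid,
)
```

Running the cheap filters before the expensive validation step keeps the cost of the pipeline down, and the counters show at which stage samples are lost.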

The Future of Synthetic Data with LLMs

As language models develop, data creation stops being a separate project and becomes part of the continuous life cycle of a system. This should allow models to learn faster and reach higher quality.

The trends of the future include:

- Automated data pipelines. The creation of datasets will turn into a conveyor belt where systems themselves determine what knowledge they lack and automatically launch the process of generating new examples. This will allow models to update in real-time without engineer intervention.

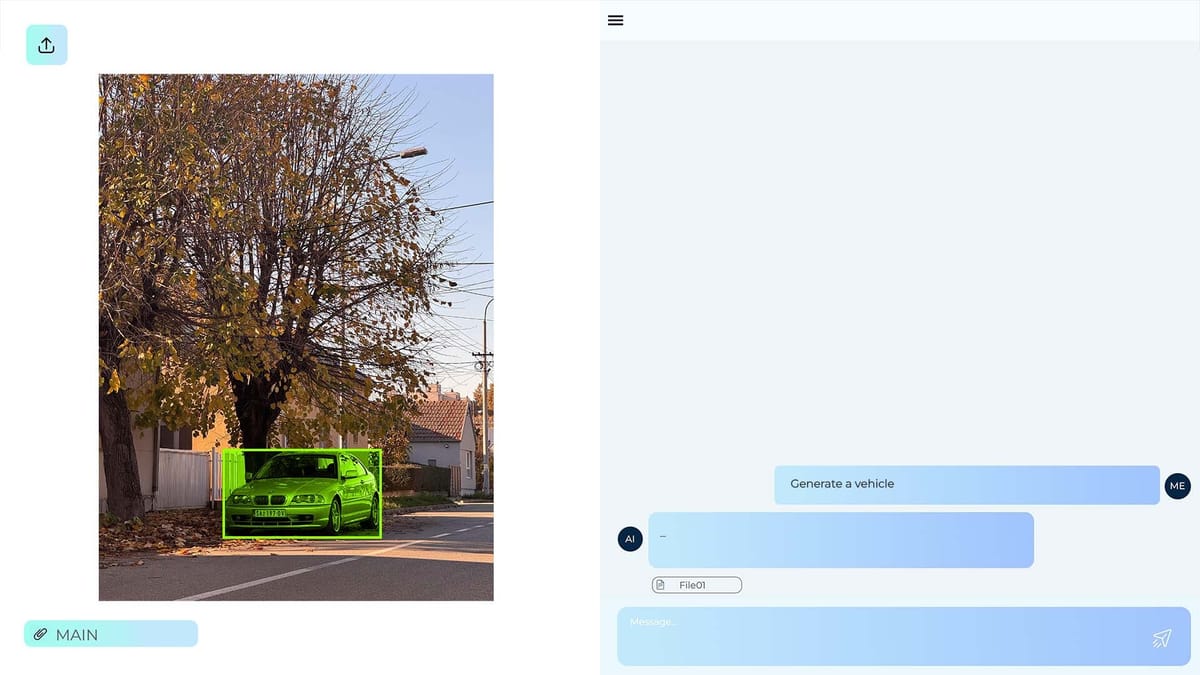

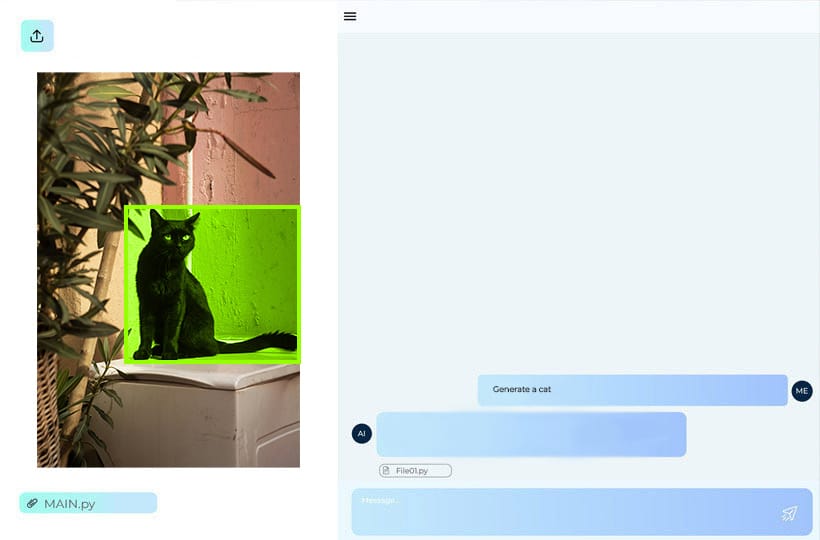

- Multimodal synthetic data. Artificial data will no longer be limited to text only. The future lies in complex sets where text, images, video, and sound are synchronized with each other. For example, a model will be able to generate a video of an industrial accident along with a text description and technical sensor logs.

- Autonomous data agents. Instead of simple algorithms, special digital agents will appear. They will "communicate" with each other, argue, and check each other's facts, creating the most objective and deep training materials.

- Self-improving datasets. Data will become dynamic. Datasets will be able to independently correct their errors based on new discoveries or user feedback. This will launch an endless cycle of improvement, where high-quality data creates better models, and those in turn generate even more perfect data.

These changes will make artificial intelligence more accessible, as companies will no longer need to own giant archives of real information to create competitive products.

FAQ

How does synthetic data help in reducing AI toxicity?

Developers create special datasets where artificial agents demonstrate correct and ethical behavior in conflict situations. Training on such examples helps the model better internalize norms of politeness and safety.

Are there special file formats for exchanging synthetic datasets?

Most often, JSON or Parquet formats are used, as they allow for efficiently storing huge volumes of structured text and metadata. This facilitates quick loading of information into cloud environments for training.
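For text datasets, JSON is often stored as JSON Lines, with one record per line. The sketch below shows a round trip using only the Python standard library. The field names are hypothetical. Parquet is not shown because it requires a third-party library.

```python
import json


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "".join(json.dumps(r, ensure_ascii=False) + "\n" for r in records)


def from_jsonl(text):
    """Parse JSON Lines back into a list of records, skipping blank lines."""
    return [json.loads(line) for line in text.split("\n") if line.strip()]


records = [
    {"text": "Hello,\nhow can I help?", "source": "synthetic", "model": "example"},
    {"text": "Bonjour, ça va ?", "source": "synthetic", "model": "example"},
]
payload = to_jsonl(records)
restored = from_jsonl(payload)
```

Because `json.dumps` escapes newlines inside strings, each record stays on a single line, which allows large files to be read and processed one record at a time.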

How to check the "freshness" of synthetic data?

It is important to compare the generated information with recent news and facts, for example through RAG systems, so that the model does not learn from an outdated picture of the world. This helps keep future AI responses relevant.

What is model "poisoning" by synthetics?

This is a situation where a model learns from its own errors or the errors of another AI, which leads to the accumulation of hallucinations and a loss of logic. The effect is often called model collapse.

What is "catastrophic forgetting" when switching to synthetics?

This is the risk that a model will learn new artificial patterns but completely lose the ability to process basic real user queries. To prevent this, a portion of time-tested real examples is always added to the training set.

Does synthetic data help in drug development?

Yes. AI generates virtual chemical compounds and models their interactions, which lets scientists skip many real laboratory experiments that would have failed. This can significantly speed up the search for new treatments for complex diseases.

How do regulators plan to mark synthetic content in the future?

Embedding invisible digital watermarks in content or its metadata is being discussed, so that other systems can quickly identify the artificial origin of information. This would help maintain the transparency of the digital environment and reduce the risk of manipulation.