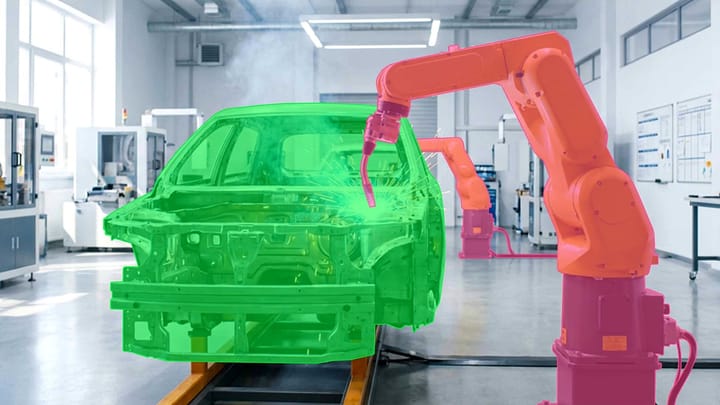

CARLA Simulator for Autonomous Driving Data Labeling

Building reliable autonomous driving systems requires enormous amounts of labeled data, but collecting that data on real roads has significant limitations. Real-world drives are expensive because of fuel, equipment, and driver labor costs, and the annotation process takes months. Furthermore, it is nearly impossible to safely capture critical situations on