Global AV Datasets and Regional Labeling

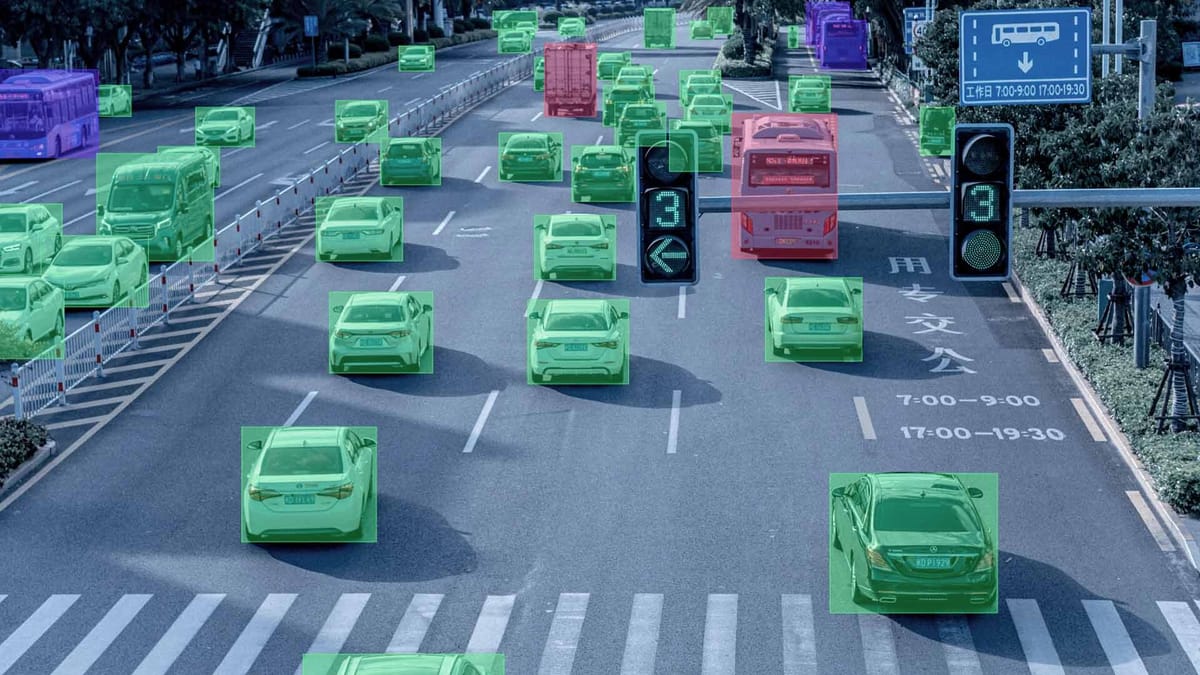

Creating a universal autonomous driving system is impossible without considering the global context, because machine learning models depend directly on the diversity of their training data. A model that performs well on the clearly marked highways of California can become dangerous in the snowy conditions of Scandinavia or the chaotic traffic of Southeast Asian megacities. The geographical limitations of training samples create "blind spots" in the car's intelligence, where any object or maneuver atypical for the original region may be misclassified or treated as an anomaly.

Global datasets allow developers to move beyond local standards and teach the system to adapt to a wide spectrum of road cultures. This applies not only to visual differences – such as the shape of road signs or the color of traffic lights – but also to fundamental features of infrastructure and social behavior. Without access to data from different parts of the world, autonomous driving systems will remain local products, unable to guarantee stability and safety beyond familiar testing grounds.

Quick Take

- Global data is essential for eliminating geographical bias in AI, which leaves models helpless in atypical conditions.

- Annotation instructions must change depending on the country.

- AI must understand local driving styles, not just formal traffic laws.

- Proper annotation of weather factors is the foundation for all-weather autonomous operations.

- Global datasets teach the model to see the essence of objects, allowing it to adapt to new cities faster without total retraining.

Specifics of Local Factors

For an autonomous car to feel confident anywhere on the planet, it must understand the unique characteristics of each region. The diversity of roads and weather conditions creates millions of variations in how the same situation can look in real life.

Infrastructure and Road Culture Features

Every country has its own unique road code, formed over decades. When developers perform geographic annotation, they find that things familiar to us may be completely absent in other parts of the world. This applies to both the appearance of the streets and the unwritten rules of driver behavior.

- Road marking. In some regions, lines on the asphalt may be yellow instead of white or entirely absent on large stretches of the road.

- Vehicles. Specific objects often appear on roads, such as rickshaws, three-wheeled trucks, or unusually shaped agricultural machinery.

- Road signs. The shape, color, and symbols on signs differ significantly, requiring the collection of separate regional data for each new market.

- Pedestrian behavior. In megacities, people may cross the road in a chaotic manner, and the model must be able to predict such local scenarios.

Because of these differences, a model trained in one country often fails when deployed in another. For example, a system might not recognize a traffic light that is mounted horizontally above the road if every traffic light in its training data stood vertically at the roadside.

Natural Challenges

Climate is one of the most difficult barriers to stable sensor performance. Optical cameras and LiDARs see the world differently when air clarity or road surface conditions change. For safe driving, it is necessary to account for all possible international driving conditions dictated by nature.

Seasonal changes also completely transform the visual appearance of the same location. The same tree has thick foliage in summer but turns into a thin skeleton of branches in winter. If data is not collected during different seasons, the model may perceive a familiar road as a completely new place. Therefore, proper labeling of such complex cases is the foundation for creating a reliable, all-weather driving system.

Cultural Adaptation of Annotation Standards

Understanding how drivers interact in different countries allows for the creation of models that can predict the logic of those around them.

Road Priorities and Driving Style

The gap between official traffic laws and real driving styles in different countries can be colossal. Artificial intelligence must account for these features when predicting trajectories. For example, in Northern European countries, drivers strictly follow the right-of-way sequence, while in many megacities in Asia or Latin America, priority often goes to whoever takes the initiative first or sounds their horn.

Local context during annotation helps the model distinguish between "aggressive" and "normal" behavior for a specific city. A sharp lane change that counts as an exceptional event in Tokyo is a standard maneuver in Mumbai. Annotators must label such actions with local driving norms in mind, so that the AI does not trigger emergency braking where a smooth reduction in speed would suffice.

Transforming Annotation Rules for Local Needs

When a project enters a new market, data labeling instructions undergo a full revision. It is impossible to use the same taxonomy (list of object classes) for the entire world, as the composition of objects on the streets constantly changes.

- Change in object classes. Indian datasets add a separate class for auto-rickshaws, and Australian ones add a dedicated class for kangaroos, whose movement is unlike that of the deer common in Europe.

- Local taxonomy. Stop signs can have different shapes and languages, so instructions must clearly describe all possible visual variations for a specific country.

- Quality criteria. Requirements for geographic annotation precision may depend on building density; on narrow Italian streets, a several-centimeter margin of error when marking curbs is critical, unlike on wide American highways.

Adapting annotation allows for a flexible system that recognizes not just a "vehicle", but understands its dimensions, typical speed, and expected behavior in that specific city. This transforms the autonomous vehicle from a visitor into a full-fledged and safe participant in local traffic.
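The region-specific taxonomy and quality criteria described in this section can be sketched as a small configuration structure. This is only an illustration: the class names, region codes, and tolerance values below are assumptions chosen for the example, not figures from any real labeling guideline.

```python
from dataclasses import dataclass

# Classes assumed to be shared by every region (hypothetical names).
BASE_CLASSES = frozenset(
    {"car", "truck", "bus", "pedestrian", "cyclist", "traffic_light", "stop_sign"}
)


@dataclass(frozen=True)
class RegionalSpec:
    region: str
    extra_classes: frozenset = frozenset()  # classes that exist only in this region
    curb_tolerance_cm: float = 10.0         # illustrative default, not a real standard

    @property
    def classes(self) -> frozenset:
        return BASE_CLASSES | self.extra_classes


# Illustrative per-region specs; all values are assumptions.
SPECS = {
    "IN": RegionalSpec("IN", extra_classes=frozenset({"auto_rickshaw"})),
    "AU": RegionalSpec("AU", extra_classes=frozenset({"kangaroo"})),
    "IT": RegionalSpec("IT", curb_tolerance_cm=3.0),   # narrow streets: tight tolerance
    "US": RegionalSpec("US", curb_tolerance_cm=15.0),  # wide highways: looser tolerance
}


def check_annotation(region, label, curb_error_cm=None):
    """Return a list of problems found for one annotation (empty if it passes)."""
    spec = SPECS[region]
    problems = []
    if label not in spec.classes:
        problems.append(f"class '{label}' is not in the {region} taxonomy")
    if curb_error_cm is not None and curb_error_cm > spec.curb_tolerance_cm:
        problems.append(
            f"curb error {curb_error_cm} cm exceeds {spec.curb_tolerance_cm} cm"
        )
    return problems


print(check_annotation("IN", "auto_rickshaw"))           # passes in the Indian spec
print(check_annotation("US", "auto_rickshaw"))           # flagged: class not defined
print(check_annotation("IT", "car", curb_error_cm=5.0))  # flagged: error too large
```

Keeping the shared classes in one place and listing only the regional additions makes it easier to see what actually changes when the instructions are revised for a new market.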

Advantages of the Global Approach

A lack of data diversity makes a system vulnerable to the smallest changes in the environment. A global approach, conversely, hardens the AI, making it capable of rapid adaptation.

Risks of Homogeneous Datasets

When a model sees only the same types of roads and objects, it becomes accustomed to specific patterns. This leads to critical recognition errors when any visual difference confuses the system.

- Classification errors. Objects not present in the training sample may be identified as "noise" or unknown obstacles.

- Unstable behavior. AI may make chaotic decisions, such as braking sharply in front of safe objects, simply because they look unusual.

- Loss of trust. Every error by an autonomous vehicle in a new region is actively discussed in the media, which slows down mass adoption and causes user skepticism.

How Global Datasets Improve Generalization

Using data from different parts of the world allows the model to learn common features of objects while ignoring secondary visual details. This significantly improves generalization ability: the system begins to understand the very essence of a "car" or an "intersection", regardless of how they are locally designed.

By combining regional data, developers can achieve high stability without needing to collect millions of new frames for every single city. A model that has seen both the desert roads of Arizona and the narrow streets of Paris adapts much faster to the conditions of, say, London or Tokyo through fine-tuning mechanisms. This makes the technology scalable and allows autonomous vehicles to become safer with every new region added to the collective knowledge pool.

FAQ

How do annotators work with roads without markings in developing countries?

In such cases, instead of lane lines, they annotate the "drivable area" and the trajectories of other vehicles so the model can navigate based on the flow and the physical boundaries of the road.

Are there differences in annotating driver hand gestures in different regions?

Yes, this is a major challenge. In some countries, an extended hand is a request to be let into the lane; in others, it is a gesture of thanks. Separate "social interaction" annotation classes are created for this purpose.

How do privacy rules affect the global annotation process?

Developers must blur faces and license plates before the data reaches annotators. In some countries, exporting raw data is prohibited, so labeling takes place on local servers.

Why are Australian kangaroos considered a "nightmare" for AV developers?

Because of their unique way of moving. Systems often estimate the distance to an object from the point where it touches the ground. When a kangaroo jumps, it leaves the surface, which confuses the algorithms: the AI might think the object has suddenly moved away or disappeared.
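A minimal sketch of why the ground-contact heuristic breaks, assuming a pinhole camera looking along a flat road. The focal length, camera height, and image center below are hypothetical values, and real perception stacks combine several cues rather than relying on this formula alone.

```python
def bottom_row(distance_m, height_above_ground_m, f_px, cam_height_m, cy):
    """Image row of an object's lowest point under a pinhole model.

    Rows grow downward; cy is the row of the horizon (principal point).
    """
    return cy + f_px * (cam_height_m - height_above_ground_m) / distance_m


def ground_contact_distance(y_bottom, f_px, cam_height_m, cy):
    """Distance estimate that assumes the lowest point touches a flat road."""
    if y_bottom <= cy:
        raise ValueError("bottom edge is at or above the horizon")
    return f_px * cam_height_m / (y_bottom - cy)


# Hypothetical camera: 1000 px focal length, mounted 1.5 m high, horizon at row 540.
F_PX, CAM_H, CY = 1000.0, 1.5, 540.0

# The same animal, 20 m away, first standing and then 0.5 m off the ground mid-jump.
standing = bottom_row(20.0, 0.0, F_PX, CAM_H, CY)
jumping = bottom_row(20.0, 0.5, F_PX, CAM_H, CY)

print(ground_contact_distance(standing, F_PX, CAM_H, CY))  # 20.0
print(ground_contact_distance(jumping, F_PX, CAM_H, CY))   # 30.0
```

The true distance never changes, yet the estimate moves from 20 m to 30 m during the jump because the heuristic treats the airborne feet as a ground contact point farther down the road.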

Why is it important to annotate "low sun" in specific geographical latitudes?

In northern countries during winter, the sun sits very low on the horizon, creating extreme glare. Annotators must mark frames with "sun dazzle" to teach the model to rely on LiDAR and radar when cameras become ineffective.

How does deduplication help in global datasets?

It prevents the dataset from being "cluttered" with near-identical frames from long highway drives. This is especially important in global projects, where large volumes of repetitive footage from one region could otherwise outweigh rarer data, such as frames from narrow streets.
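One way to picture deduplication is a perceptual hash: frames whose hashes differ in only a few bits are treated as near-duplicates and dropped. The sketch below uses a simple average hash on tiny grayscale grids. The technique, the threshold, and the toy frames are assumptions for illustration, since the article does not say which method is used in practice.

```python
def average_hash(gray):
    """Hash a small grayscale grid: one bit per pixel, set if brighter than the mean."""
    pixels = [p for row in gray for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | int(p > mean)
    return bits


def hamming(a, b):
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def deduplicate(frames, max_distance=2):
    """Keep a frame only if its hash is far from every frame already kept.

    frames: iterable of (frame_id, grayscale grid). Returns the kept ids in order.
    """
    kept = []  # (frame_id, hash) pairs
    for frame_id, gray in frames:
        h = average_hash(gray)
        if all(hamming(h, kept_hash) > max_distance for _, kept_hash in kept):
            kept.append((frame_id, h))
    return [frame_id for frame_id, _ in kept]


# Toy 2x4 "frames": two nearly identical highway shots and one different alley shot.
frames = [
    ("highway_a", [[10, 10, 200, 200], [10, 10, 200, 200]]),
    ("highway_b", [[12, 11, 198, 201], [9, 10, 202, 199]]),
    ("alley", [[200, 10, 200, 10], [10, 200, 10, 200]]),
]

print(deduplicate(frames))  # ['highway_a', 'alley']
```

This pairwise comparison is quadratic in the number of kept frames, so a dataset at global scale would need an indexed or bucketed variant. The sketch only shows the principle.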

What is "semantic mismatch" in regional signs?

This is a situation where signs have the same function but a completely different appearance. For example, a "Stop" sign is octagonal in most countries, but in Japan, it is triangular and points downward. Without regional data, the AI will simply ignore such a critical command.

How is model performance quality verified when moving to a new region?

Zero-shot testing is used: the model is evaluated on data from a new country that it never saw during training. This lets developers quantify "geographical bias" and estimate how much new data needs to be added for safe operation.
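As a rough illustration of such a check, the sketch below compares per-region accuracy against the region the model was trained on. The record format, the region codes, and the use of plain classification accuracy as the metric are assumptions made for this example. A real evaluation would use detection and planning metrics on far larger samples.

```python
from collections import defaultdict


def accuracy_by_region(records):
    """records: iterable of (region, predicted_label, true_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for region, predicted, actual in records:
        total[region] += 1
        correct[region] += int(predicted == actual)
    return {region: correct[region] / total[region] for region in total}


def geographic_gap(records, home_region):
    """Accuracy drop of each unseen region relative to the home (training) region."""
    acc = accuracy_by_region(records)
    home = acc[home_region]
    return {region: home - a for region, a in acc.items() if region != home_region}


# Toy results: a model trained on "US" data, evaluated zero-shot on "JP" data.
records = [
    ("US", "stop_sign", "stop_sign"),
    ("US", "car", "car"),
    ("US", "pedestrian", "pedestrian"),
    ("US", "truck", "truck"),
    ("JP", "car", "car"),
    ("JP", "unknown", "stop_sign"),  # triangular stop sign missed
    ("JP", "pedestrian", "pedestrian"),
    ("JP", "unknown", "stop_sign"),
]

print(accuracy_by_region(records))    # {'US': 1.0, 'JP': 0.5}
print(geographic_gap(records, "US"))  # {'JP': 0.5}
```

A large gap for a region signals that more local data, or revised annotation instructions, are needed before deployment there.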