Case Studies: Semantic segmantation in examples and details

Semantic segmentation is a powerful technique that has revolutionized many fields, including medical imaging, autonomous driving, and object detection. It allows computers to understand the content of an image at a pixel level, dividing it into meaningful regions and assigning labels to each of them. This technology has opened up new possibilities for many industries, enabling them to automate complex tasks, improve accuracy, and save time and resources.

In this article, we will explore the world of semantic segmentation through real-world examples and case studies. We will delve into the basics of semantic segmentation, the techniques and algorithms used, and the challenges and limitations of this technology. We will also examine three different case studies that showcase the diverse applications of semantic segmentation in medical imaging, autonomous driving, and object detection.

Lastly, we will discuss the future of semantic segmentation and its potential for further advancements and developments. Join us on this informative journey to learn more about semantic segmentation and its real-world applications.

Introduction To Semantic Segmentation

Semantic segmentation is a computer vision task that involves assigning a label to every pixel in an image. This technique can be used in various fields such as autonomous driving, object recognition, and medical imaging. Semantic segmentation aims to classify all objects in the scene and group pixels that belong to the same class. It is different from instance segmentation, which differentiates between multiple instances of the same class.

Deep learning methods have proved highly effective for semantic segmentation, particularly with remote sensing data. However, most state-of-the-art approaches rely on supervised training using hand-crafted feature sets. The training data often includes large datasets with pixel-level labels, which require significant annotation effort.

Along with the popular deep learning architectures such as U-net and FCN (Fully Convolutional Networks), researchers have also explored various approaches including hybrid networks for multi-modal data fusion, attention mechanisms for weight adjustment with respect to feature significance, and use of prior information about object shapes and sizes during pre-processing.

Semantic segmentation has gained momentum in recent years owing to its vast applications not only restricted within artificial intelligence systems but also relevant across many other domains including digital marketing and urban planning. While deep learning techniques continue to evolve more sophisticated ways of handling vast datasets or processing large volumes of images efficiently along side less supervised approach by utilizing available dataset characteristics towards semi-supervised or even unsupervised conditional clustering or sorting; considering these combined factors it is likely that innovative developments will continue over the foreseeable future thereby improving computational efficiencies across various industries making way for next generation business use cases across diverse geographies and markets alike.

Understanding The Basics Of Semantic Segmentation

Semantic Segmentation is a computer vision technique that involves labeling and segmenting every pixel in an image based on its semantic content. This technology is widely used in various fields, such as remote sensing, autonomous vehicles, and medical imaging. The traditional segmentation methods have some limitations regarding the accuracy of object boundaries and detection of small or complex objects. However, Deep Learning algorithms enable end-to-end semantic segmentation workflows that have led to significant progress in the field.

One commonly used method in Semantic Segmentation is called encoder-decoder architecture that consists of two major components: the encoder and decoder networks. The encoder network compresses the input image into a lower-dimensional feature space by successive convolutional layers. Meanwhile, the decoder network takes this feature representation as input and generates an output segmentation map with pixel-wise labels for each object class. Several variants of this architecture exist with different skip connections between corresponding layers of encoding and decoding parts.

This technique has several applications in real-world scenarios. For instance, multi-class lane semantic segmentation is a particular problem encountered while building driving assistance systems for autonomous cars or advanced driver-assistance systems (ADAS) which lead to crucial improvements on safe-driving human experiences.

Semantic Segmentation plays a vital role in many fields where accurate pixel-wise labeling is required. With better deep learning models being developed every day, there's no doubt that Semantic Segmentation will continue to be one of the most important data labeling tasks for computer vision applications.

Case Study 1: Semantic Segmentation In Medical Imaging

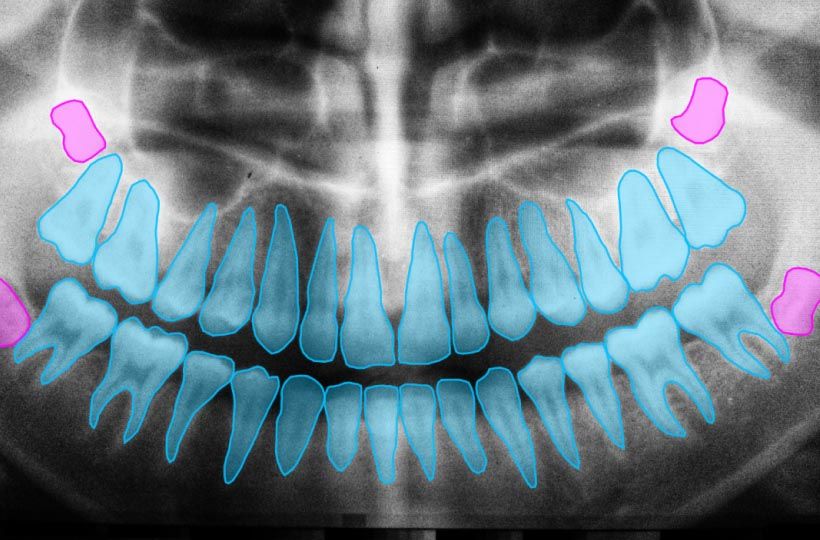

In the field of medical imaging, semantic segmentation has been employed to check the growth of organs, diseases, or abnormalities in images. Recent advances in fully convolutional networks have enabled automatic segmentation using deep neural networks. Semantic segmentation has revolutionized the field by making it easier to extract relevant information from complex medical images.

Multi-dimensional statistical features are used for extraction and fusion in medical image segmentation. These features can successfully identify objects and areas of interest and perform accurate automated measurements. The use of these techniques allows faster diagnosis and treatment planning, which ultimately leads to better patient outcomes.

One practical application of semantic segmentation is identifying tumors on MRI scans. By segmenting suspicious regions from healthy brain tissues, doctors can better analyze and diagnose conditions such as gliomas or meningiomas with greater accuracy. Furthermore, semantic segmentation has found applications well beyond MRI scans in a range of medical procedures including endoscopy, radiography among others.

Semantic Segmentation is incredibly important in medical imaging as it improves diagnostic speed and accuracy. It helps doctors identify abnormal areas more precisely and assist in developing targeted treatment options for patients with certain conditions based on the semantic content extracted from their medical images datasets all within an improved timescale due to this automation technique through machine learning models like fully convolutional neural nets

Case Study 2: Semantic Segmentation In Autonomous Driving

Semantic segmentation is a vital perception task in autonomous driving. It involves identifying objects and their boundaries within an image, which helps the vehicle to understand its surroundings. LiDAR and camera are two modalities used for 3D semantic segmentation in autonomous driving. Different modalities are required for self-driving vehicles to perceive their environment accurately.

Several deep learning architectures like convolutional neural networks and autoencoders have been used to develop models for semantic segmentation in autonomous vehicles. Cross entropy loss-based deep networks have achieved progress with mean Intersection-over-Union.

Research shows that most studies on semantic segmentation have solely focused on enhancing accuracy, with less attention paid to computationally efficient solutions. Therefore, there is a need to evaluate various models using different modalities available for 3D semantic segmentation in autonomous driving.

Adversarial attacks against LiDAR-based semantic segmentation can easily be executed by hackers who inject adversarial noise into the model's input data and cause it to misclassify objects or stop working altogether. Therefore, it's essential to apply appropriate defenses against such attacks when developing these models for use in autonomous driving systems.

Case Study 3: Semantic Segmentation In Object Detection

Semantic segmentation is a crucial task in computer vision that allows machines to understand and analyze images and videos at the pixel level. State-of-the-art methods use multi-task learning for depth estimation and semantic segmentation, which has shown remarkable improvements in perceptual understanding. Convolutional Neural Networks (CNNs) and Vision Transformers are popular architecture models used for semantic segmentation.

Joint object detection and semantic segmentation is necessary for applications such as autonomous driving, image editing, and robotics. The main challenge of combining these tasks is associating semantic segmentation with object instance detection to accurately locate objects within an image. Networks for semantic segmentation typically consist of an encoder for feature extraction and a decoder for dense pixel classification.

Training CNNs for semantic segmentation requires high-quality data, but rare objects can affect prediction stability and accuracy. To address this problem, researchers have proposed training strategies based on generative adversarial networks (GANs), which allow the network to generate synthetic data similar to real-world scenarios.

Semantic Segmentation combined with Object Detection has numerous applications across various fields today. CNNs and Vision transformers are popular architecture models used in this approach that combine deep learning methodologies & multitask learning regimes that provide excellent results with reliable accuracy rates even in the face of rare object prediction challenges causing distorted results from other CNN architectures not fit enough to handle missing-data exceptions commonly found in segmented datasets.

Techniques And Algorithms Used In Semantic Segmentation

Semantic segmentation is a classification task that extracts meaningful information from images or input frames in videos or recordings. This process involves three steps: classifying, localizing, and segmentation. There are two main types of segmentation techniques: instance segmentation and semantic segmentation. Semantic segmentation refers to the labeling of every pixel of an image with a class label from a predetermined set of classes.

Many approaches have been developed for semantic segmentation, including traditional methods using threshold-based techniques and deep learning-based techniques. Traditional techniques involve extracting features from the image and subsequently classifying the pixels. However, deep learning-based approaches outperform traditional methods by exploiting vast amounts of labeled data to learn complex models that behave differently depending on different features within an image.

Amazon's SageMaker semantic segmentation algorithm provides a highly accurate automated solution for this task where pixel-level labels can be predictably generated in real-time through machine learning processing in scalable cloud computing environments such as Amazon Web Services (AWS). The advantage of this technique is that it requires minimal effort compared to traditional methods while providing highly accurate results.

Semantic segmentation is essential for computer vision tasks such as object recognition, autonomous driving cars, medical imaging diagnostics like CT scans and MRI readings among others. Techniques used in semantic segmentation can be both traditional (threshold-based) ones or more advanced deep learning algorithms currently at hand like those offered by Amazon's SageMaker platform providing affordable scalability solutions with high accuracy outcomes irrespective of large complex datasets across different domains like retail on mobile application platforms serving real time fashion lookbook building applications that cater virtual outfits styled over user photographs or health sciences performing ultrasound anomaly detection through automating liver picking anomalies for classified foregrounds!

Challenges And Limitations Of Semantic Segmentation

Semantic segmentation is a powerful technique in image analysis with applications such as autonomous driving, medical image analysis, and land coverage analysis. However, this technique presents challenges and limitations in certain domains. For instance, analyzing corals poses difficulties due to the complex morphology of these lifeforms. Traditional computer vision methods have limitations in identifying multiple types of defects and obtaining pixel-level segmentation.

Deep learning has proven effective in processing remote sensing images thanks to its ability to automatically extract relevant features from large amounts of data. Several deep learning-based semantic segmentation methods have been proposed that perform well even in the presence of fine structures. However, deep learning techniques are prone to inherent class imbalance issues that can degrade performance.

Architecture models like CNNs and ViTs provide effective results on semantic segmentation tasks for various tasks including medical image analysis and autonomous driving. In recent years, there has been a growing interest in segmenting low-channel roadside 3D LiDAR data. A novel slice-based method was introduced for roadside 3D LiDAR semantic segmentation that effectively overcomes some limitations by using information captured across different heights.

Despite the challenges presented by certain domains, Semantic Segmentation remains an important tool for extracting meaningful insights from image data due to its versatility across many fields ranging from geology, agriculture research or medicine.

Future Applications And Developments In Semantic Segmentation

Multi-modal data fusion is paving the way for new developments in semantic segmentation. This research direction has shown great promise in enhancing segmentation performance by harnessing the complementary strengths of different modalities. Data from sources such as LiDAR, RGB cameras, and thermal cameras can be combined to create more comprehensive and fine-grained annotations.

Another area of development for semantic segmentation is smart transportation. As autonomous vehicles become more common, they require accurate and efficient perception systems to interpret their surroundings. Semantic segmentation plays a critical role in this process by enabling machines to comprehend and analyze visual data.

Despite its importance, developing effective models for semantic segmentation remains a challenge. Traditional encoder-decoder designs have been widely used but often struggle with handling complex or overlapping objects. Researchers are exploring alternative approaches such as adapting transformer networks or incorporating attention mechanisms.

Advances in multi-modal data fusion, smart transportation technology, and model architecture will continue to shape developments in semantic segmentation. These solutions are crucial not only for improving accuracy but also for achieving real-world applications that can benefit society at large.