Overcoming Bias in Data Annotation for Autonomous Vehicles

The push for autonomous vehicles has driven an enormous amount of innovation in computer-vision-based AI. Driverless cars are already a feature on some roads and have the potential to revolutionise travel for all of us.

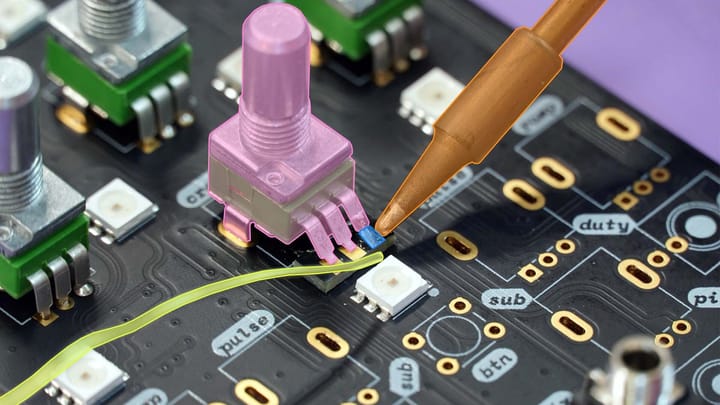

To sustain this pace of development, AI innovators need access to high-quality, affordable image and video training data. Professional annotation services, like Keymakr, are stepping in to meet this need, but challenges remain.

Bias in the data used to train autonomous vehicle models is one of these significant challenges. This blog will identify three areas impacted by biased data and suggest ways in which image and video annotation services can help to overcome these problems.

Diverse Traffic Rules and Signage

The rules of the road and traffic conditions vary tremendously from country to country. Traffic signs can look entirely different, carry a different meaning, and can, of course, be written in languages other than English.

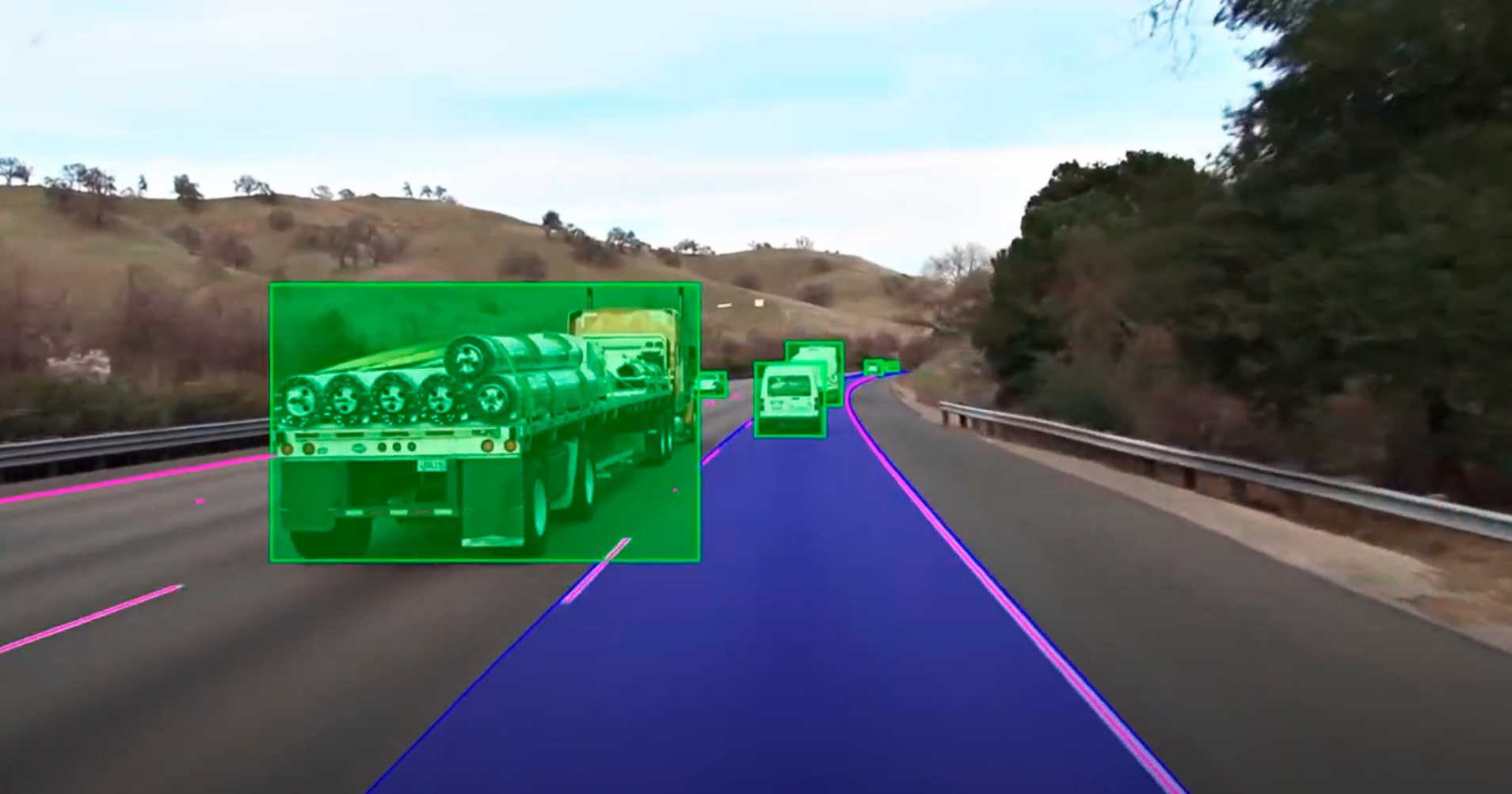

Road markings also vary significantly between regions. Autonomous vehicles often rely on recognising lane markings and dividing hashes to locate themselves on a busy highway. A change in these markings could lead to dangerous situations for passengers and other road users.

Some countries mandate driving on the left-hand side of the road, and this again could cause problems for an AI trained exclusively with image and video data created in the United States.

Image and video data for machine learning that is biased towards the regulations and signage of one particular country will disadvantage autonomous vehicles in other environments.

Smart annotation can circumvent this issue by providing developers with labelled images and video from any number of regions. Accurately annotating road signs and road markings in diverse contexts leads to more adaptable computer vision AI models.
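One way to spot this kind of bias before training is to tally how annotated frames are distributed across regions. The snippet below is a minimal sketch rather than a description of any particular annotation tool: the `region` metadata field, the `coverage_report` helper, the 15% threshold, and the sample counts are all hypothetical and chosen only for illustration.

```python
from collections import Counter


def coverage_report(records, key, min_share=0.10):
    """Return {value: (share, is_underrepresented)} for one metadata field.

    `records` is a list of dicts describing annotated frames; `key` is the
    (hypothetical) metadata field to audit, such as "region".
    """
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return {
        value: (count / total, count / total < min_share)
        for value, count in counts.items()
    }


# Hypothetical metadata for ten annotated frames, heavily skewed to one country.
frames = (
    [{"region": "US"}] * 8
    + [{"region": "UK"}] * 1
    + [{"region": "JP"}] * 1
)

report = coverage_report(frames, "region", min_share=0.15)
for region, (share, flagged) in report.items():
    status = "underrepresented" if flagged else "ok"
    print(f"{region}: {share:.0%} ({status})")
```

Regions flagged as underrepresented are candidates for additional data collection and annotation. The same tally could be run on any other metadata field that the dataset records.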

Navigating through Challenging Conditions

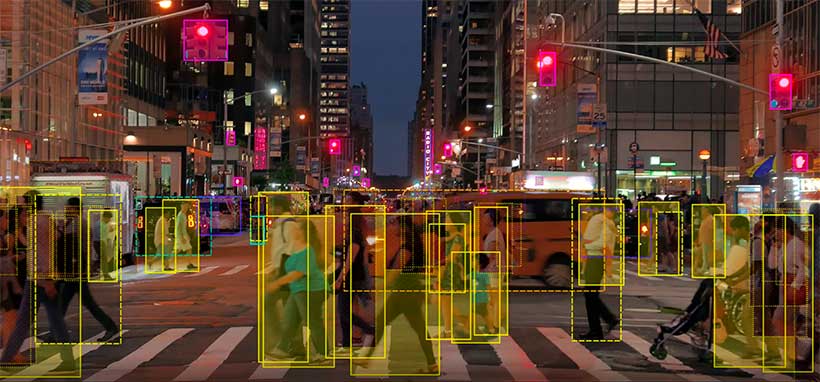

Autonomous cars are extremely reliable when operating in daytime and in good weather. However, reliability decreases markedly in low light and low visibility. There is a danger that an autonomous vehicle might transfer control back to a driver with little warning as the sun begins to set.

Similarly, poor weather conditions can impact AI models that are used to seeing objects clearly, unobstructed by rain or fog. Changeable weather is a feature of driving and something that future generations of self-driving vehicles must be able to deal with.

Annotation providers, like Keymakr, can play a part in troubleshooting these persistent problems by creating and labeling bespoke datasets. Experienced teams of annotators can take on the burden of annotating images and video that accurately reflect nighttime driving and low-visibility weather.

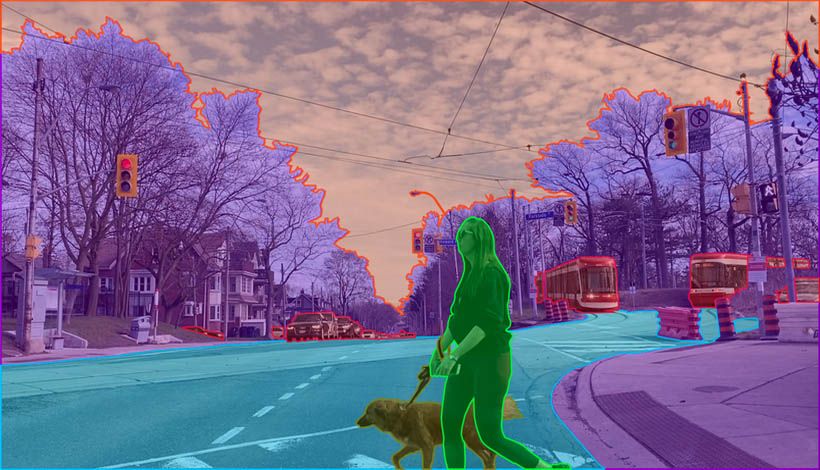

Adapting to Cultural Contexts

The vast majority of autonomous car development has so far taken place in the United States, and in California in particular. Taking autonomous vehicles out of this particular cultural context could lead to misunderstandings and errors.

Different countries may maintain their roads to different standards. Road markings, for example, might not be present or could be faded to the point of unrecognizability.

Driving etiquette can vary between cultures, with drivers in some countries being more or less willing to follow rules or obey speed limits. Autonomous cars also need to recognise pedestrians in diverse cultures, pedestrians who may look and dress differently to those most commonly featured in training imagery.

Again, the solution to these complex issues can often be found in a good collaborative relationship between AI projects and annotation service providers. By communicating their needs to responsive annotation teams, developers can build up the comprehensive and diverse training materials that are essential for autonomous vehicle design.

Keymakr provides professional data annotation for autonomous vehicles, utilizing experienced in-house annotation teams and proprietary technology. Contact a team member to book your personalized demo today.