How Annotation Tools Can Improve Quality Control

Quality image and video data is at the heart of any successful computer-vision-based AI project. Precisely annotated training datasets tend to produce models that perform well in a chaotic and changing world. The inverse is also true: datasets strewn with errors and inaccuracies can lead to AI systems failing when they are needed most.

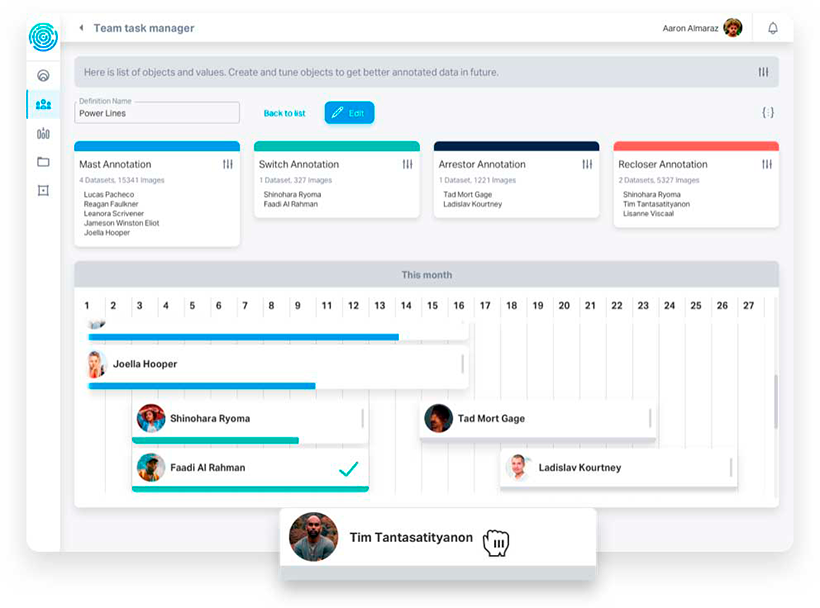

Quality control, therefore, is an essential element of any image and video labeling process. But maintaining consistent quality control procedures throughout a labeling operation can be challenging, even for larger companies. Distributed annotation workforces, using a variety of annotation tools, can be hard to manage and assess. Increasingly, companies are turning to commercially produced annotation tools, like Keylabs, that have quality control features built in.

This blog post addresses some of the key quality control issues facing companies engaged in image and video annotation, and discusses how smart annotation tools are helping to overcome labeling challenges.

Quality Control: Key Issues for Image and Video Annotation

Image and video annotation can be laborious, time-consuming work that requires attention to detail from every team member over extended periods. Managing large teams, often crowdsourced or working remotely, without adequate quality control systems in place can lead to significant problems in finished datasets:

- Management and quality control are difficult with a distributed team. Managers may have to communicate the specific demands of a project across different time zones and cultural contexts. This can lead to quality issues down the line.

- Remote workers may not be familiar with the annotation tools a project uses. Progress can then be slower because more training is required.

- Troubleshooting can be extremely time-consuming because issues have to be identified and rectified across a dispersed workforce.

- Remote workers may not be devoting their full time to a project. This can affect the quality of the labels being produced and could make it hard to scale up when data needs grow.


Smart Annotation Tools Ensure Quality Annotation

Quality control can prove to be the Achilles' heel of annotation processes. However, it is now possible for managers to take control of image and video data quality by taking advantage of smart annotation tool features. Keylabs supports quality control by applying the following procedures to labeled images and video:

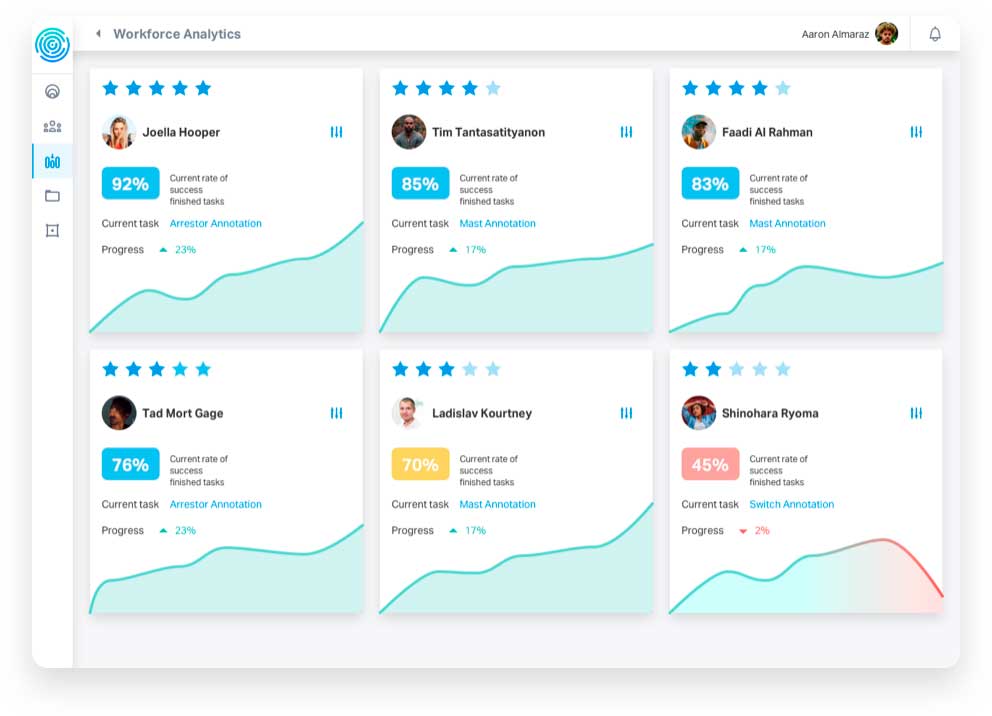

- Review: Annotations are reviewed four times in order to confirm accuracy. Two annotators label a given object, and a supervisor then checks the quality of their work. Finally, an auto-quality algorithm assesses the overall precision of the dataset.

- Grade: The results of the four-level review are used as the basis for grading annotations. This metric for labeling accuracy allows managers to see the performance of an annotator in real time, with just a few clicks.

- Flag: Errors that have been caught by the integrated tool systems are flagged for managers and annotators. This allows for instant troubleshooting. Managers communicate with annotators through the tool, providing them with guidance or training and helping to prevent the same mistakes from recurring.

- Assign: Using the detailed analytics produced by the quality control process, Keylabs then assigns work to annotators based on the overall precision of their work. This delegation tool creates a virtuous circle where annotators are rewarded for accurate work, and provides managers with an opportunity to train lower-performing team members.
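To make the grade, flag and assign steps more concrete, here is a minimal Python sketch. It is not Keylabs code and does not reflect how the platform works internally. It assumes bounding-box annotations, uses intersection over union (IoU) against a supervisor-approved reference box as a stand-in accuracy measure, and every name in it (`Box`, `Review`, `grade_and_flag`, `assignment_order`, the 0.75 threshold) is hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box given by two corner points."""
    x1: float
    y1: float
    x2: float
    y2: float


@dataclass(frozen=True)
class Review:
    """One annotator's box for an item, plus the reference box
    (hypothetically, the one a supervisor approved)."""
    item_id: str
    annotator: str
    box: Box
    reference: Box


def iou(a: Box, b: Box) -> float:
    """Intersection over union: 1.0 for identical boxes, 0.0 for no overlap."""
    inter_w = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1))
    inter_h = max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    inter = inter_w * inter_h
    area_a = (a.x2 - a.x1) * (a.y2 - a.y1)
    area_b = (b.x2 - b.x1) * (b.y2 - b.y1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def grade_and_flag(reviews, threshold=0.75):
    """Grade each annotator by mean IoU and flag items scoring below threshold."""
    scores = defaultdict(list)
    flagged = []
    for r in reviews:
        score = iou(r.box, r.reference)
        scores[r.annotator].append(score)
        if score < threshold:
            flagged.append((r.item_id, r.annotator))
    grades = {name: sum(vals) / len(vals) for name, vals in scores.items()}
    return grades, flagged


def assignment_order(grades):
    """Order annotators from highest to lowest grade for assigning new work."""
    return sorted(grades, key=grades.get, reverse=True)


# Illustrative data only: two annotators, two images.
reference = Box(0, 0, 10, 10)
reviews = [
    Review("img-1", "ana", Box(0, 0, 10, 10), reference),
    Review("img-1", "ben", Box(0, 0, 10, 5), reference),
    Review("img-2", "ana", Box(0, 0, 10, 8), reference),
    Review("img-2", "ben", Box(0, 0, 10, 10), reference),
]

grades, flagged = grade_and_flag(reviews)
print(grades)
print(flagged)
print(assignment_order(grades))
```

In this sketch a flagged item is just an `(item_id, annotator)` pair that a manager could follow up on, and the assignment order is a simple ranking by grade. A real tool would likely weigh more signals, such as task type or volume, before delegating work.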

Keylabs is an annotation tool designed and built by image and video annotation experts. This state-of-the-art platform has been developed with quality control at its heart. Bespoke labeling features speed up dataset creation, whilst user-friendly interfaces facilitate good communication and easy troubleshooting.