Computer Vision Applications for the Visually Impaired

Computer vision technology has the potential to increase accessibility for visually impaired people, who face a range of barriers when navigating both real and virtual spaces. Online, the extensive use of uncaptioned images can make it difficult for them to take part in social media and news conversations.

Real-world environments are also difficult for visually impaired people to negotiate, with many public spaces still showing a lack of concern for accessibility. A number of AI companies are developing computer vision models that aim to give visually impaired people better access to activities that many sighted people take for granted.

This blog post looks at two emerging use cases for AI in this area. Making the most of these efforts depends in part on annotated image and video training data. We will examine how annotation providers, like Keymakr, can support this exciting technology with affordable, high-quality training datasets.

Digital accessibility

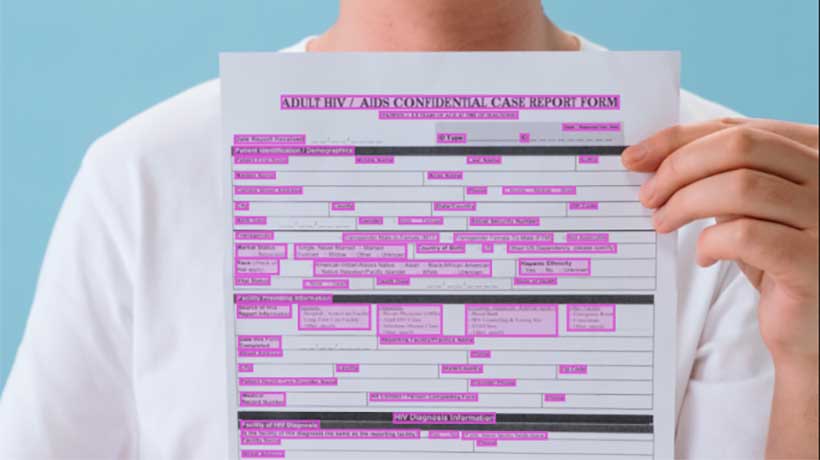

Alt text is the term used for words attached to digital images that describe the nature or content of the image. Alt text has many applications but it is essential for the visually impaired, as it allows for the easy identification of digital images. However, many images online do not have alt text attached to them, making it difficult for visually impaired people to participate in many online conversations.
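To make the gap concrete, here is a minimal sketch of how a page could be checked for images without alt text, using only Python's standard library. The function and class names are our own, chosen for illustration. Note one assumption: the sketch flags empty alt attributes as well as missing ones, even though an empty alt is legitimate for purely decorative images, so flagged images still need a human look.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> tag whose alt text is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        alt = attr_map.get("alt")
        # Assumption: blank alt text is treated as missing. Decorative images
        # may use alt="" on purpose, so results should be reviewed by a person.
        if alt is None or not alt.strip():
            self.missing.append(attr_map.get("src") or "")


def find_images_without_alt(html: str) -> list[str]:
    """Return the src values of images that have no usable alt text."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing
```

Running `find_images_without_alt` over a page's HTML gives a list of image sources that a screen reader would have nothing to say about.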

AI may be able to bridge this gap by making object, text, and picture recognition available to users. Microsoft’s Seeing AI is one example of these AI-powered apps. This technology is able to read and scan text, identify common items, describe images or scenes, and identify people. By converting images and text into spoken words, apps like this can open up a range of digital communication options for the partially sighted, whilst making day-to-day tasks easier as well.

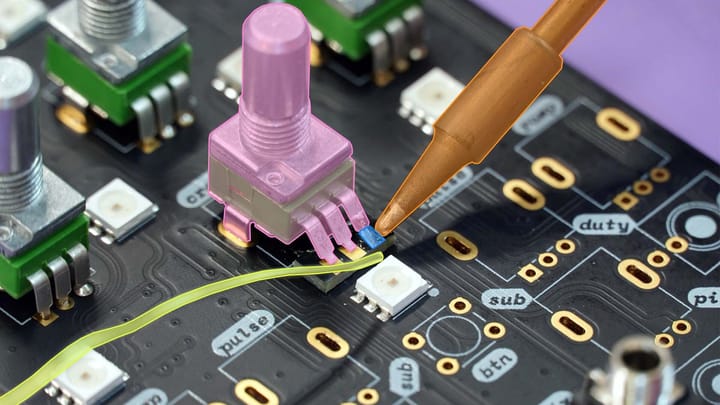

This exciting progress must be backed up with effective, annotated training data. Whilst recognition models may perform well with defined image sets, translating that performance into a reliable, everyday experience for the average user is a challenge. Image annotation providers are often best placed to provide AI innovators with varied image datasets that reflect the true complexity of the real world. As experts in data collection and creation, experienced annotation providers can ensure that training images fit with the specific requirements of any AI project.

Navigating complex environments

Negotiating busy, complicated, and at times dangerous public spaces can be a significant challenge for the visually impaired. To navigate these spaces safely, visually impaired people need audible information about risks and obstacles. AI-powered virtual guides may provide part of the solution.

Machine learning models have the capacity to identify objects and alert users when they are approaching potential hazards or important cues, such as stop signs or construction work. Bluetooth-connected earphones can provide users with warnings or even directly answer questions, letting them know where they are or what a particular object is.
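As a rough illustration of the alerting step, the sketch below turns a list of detections into short messages that could be read aloud. It is not any particular product's pipeline. The `Detection` fields, the label names, and the confidence and distance thresholds are all assumptions made for the example; a real system would take them from its own detector and safety requirements.

```python
from dataclasses import dataclass

# Hypothetical label set; a real model would define its own classes.
HAZARD_LABELS = {"stop sign", "construction work"}


@dataclass(frozen=True)
class Detection:
    label: str          # class name predicted by the (assumed) detector
    confidence: float   # score between 0 and 1
    distance_m: float   # estimated distance to the object in metres


def hazard_alerts(detections, min_confidence=0.6, max_distance_m=10.0):
    """Return spoken-style warnings for nearby hazards, closest first."""
    nearby = [
        d for d in detections
        if d.label in HAZARD_LABELS
        and d.confidence >= min_confidence
        and d.distance_m <= max_distance_m
    ]
    nearby.sort(key=lambda d: d.distance_m)

    messages = []
    for d in nearby:
        metres = round(d.distance_m)
        unit = "metre" if metres == 1 else "metres"
        messages.append(f"{d.label.capitalize()} ahead, about {metres} {unit}")
    return messages
```

Each returned string could then be passed to a text-to-speech engine and played through the user's earphones. Low-confidence and distant detections are dropped so that the user is not flooded with warnings.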

This technology promises to radically open up public spaces for the visually impaired. However, due to safety implications it may be some time before AI guides are available for all potential users. The key to ensuring the success of this technology is high quality training data.

Mistakes at the annotation stage will inhibit the performance of these systems and make them less safe. Professional annotation services can reliably provide innovators with error-free datasets because they employ robust quality control processes. Keymakr uses four levels of human and machine verification to ensure that annotation mistakes do not make it through to training datasets.
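One common, generic form of automated checking is to compare two independent annotation passes and send disagreements back for review. The sketch below does this for bounding boxes using intersection over union (IoU). It is an illustration only, not a description of Keymakr's verification process; the function names, the `(x1, y1, x2, y2)` box format, and the 0.8 threshold are assumptions.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap, clamped at zero when boxes do not meet.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def flag_for_review(first_pass, second_pass, min_iou=0.8):
    """Return image ids where the two passes disagree or one is missing.

    Both arguments map an image id to a single bounding box.
    """
    flagged = []
    for image_id, box in first_pass.items():
        other = second_pass.get(image_id)
        if other is None or iou(box, other) < min_iou:
            flagged.append(image_id)
    return flagged
```

Images returned by `flag_for_review` would go to a human reviewer rather than straight into the training set.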

Collaboration between experienced annotation professionals and developers can streamline AI projects for the visually impaired. Keymakr’s experienced teams and proprietary annotation platform support innovative machine learning projects.