Best Practices for Image Annotation Projects

Image annotation is a crucial step in the training of machine learning models. Your model cannot correctly identify objects in images or videos without accurate annotations.

Best practices can help ensure your image annotation projects go smoothly and efficiently. The best practices below will help you build reliable machine learning models trained on diverse data.

Top 8 best practices for image annotation projects

1. Have a clear labeling strategy

Without a plan, you may waste time on tedious tasks or miss labeling opportunities that could improve your model's performance. A good labeling strategy depends on your labeling goals, the available data, and the types of labels you want to assign.

When labeling your data, it's essential to keep your labels as clear and consistent as possible. Use the same format and terminology for all labels. For example, if you label a location as "city" in one image, don't use another word like "town" in another.

Be consistent about capitalization and punctuation. For example, is it "New York" or "New York City"? Is it "state legislature" or "State Legislature"? Decide which convention works best for your dataset and stick with it throughout the labeling process.
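
One way to enforce a naming convention is to normalize every label against a fixed vocabulary before it enters the dataset. The sketch below is a minimal illustration; the alias table and the function name `normalize_label` are hypothetical and would need to reflect your own label set.

```python
# Hypothetical alias table: maps each accepted spelling to one canonical label.
CANONICAL_LABELS = {
    "city": "city",
    "town": "city",
    "new york": "new_york",
    "new york city": "new_york",
}


def normalize_label(raw: str) -> str:
    """Return the canonical label for a raw annotator-entered string."""
    # Lowercase, trim, and collapse repeated whitespace before the lookup.
    key = " ".join(raw.strip().lower().split())
    if key not in CANONICAL_LABELS:
        # Reject unknown labels instead of silently creating a new class.
        raise ValueError(f"Unknown label: {raw!r}")
    return CANONICAL_LABELS[key]


print(normalize_label("  New York City "))  # new_york
```

Rejecting unknown labels, rather than passing them through, surfaces inconsistencies while they are still cheap to fix.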

You might consider using a semi-supervised approach if you can't get clear labels for all the classes. This approach trains the model on a mix of labeled and unlabeled data.

2. Use quality data

The quality of your annotations will directly impact the model's performance, so it's crucial to invest in high-quality training datasets. For example, if you're trying to label a set of images, make sure it represents all the different variations in what you're trying to label.

Create training data that covers as many of your expected use cases as possible. For example, use high-quality images from various angles, distances, and lighting conditions. These factors help train models that are more robust and accurate. In addition, it is vital to work with annotators who have expertise in the relevant field and to train them on your labeling guidelines.
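
A simple coverage check can reveal which combinations of class and capture condition are missing from a dataset. The following is a rough sketch that assumes each image record carries a `label` and a `lighting` field; those field names and the function `find_coverage_gaps` are illustrative, not taken from any particular tool.

```python
from collections import Counter
from itertools import product


def find_coverage_gaps(records, classes, conditions, min_count=1):
    """List (class, condition) pairs with fewer than min_count images."""
    counts = Counter((r["label"], r["lighting"]) for r in records)
    return [
        (cls, cond)
        for cls, cond in product(classes, conditions)
        if counts[(cls, cond)] < min_count
    ]


# Hypothetical metadata for three images.
records = [
    {"label": "car", "lighting": "day"},
    {"label": "car", "lighting": "night"},
    {"label": "pedestrian", "lighting": "day"},
]

gaps = find_coverage_gaps(records, ["car", "pedestrian"], ["day", "night"])
print(gaps)  # [('pedestrian', 'night')]
```

The same idea extends to angle or distance by adding those fields to the key.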

3. Measure data accuracy, reliability, and diversity

To determine the accuracy and reliability of your machine learning model, you need to measure how well it performs across various tasks. Create a test dataset with positive and negative examples. Annotate these examples to establish the ground truth, then run the model on them and compare how often its output matches the labels.

For example, to measure the accuracy of a face detection algorithm, you would collect images containing faces as positives and images without faces as negatives. Then you would run those labeled images through the algorithm and count how many it gets correct versus incorrect.
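
The comparison described above can be sketched in a few lines. This example assumes the ground truth and the model output are both available as lists of booleans (face present or not), one entry per test image; the function name `detection_metrics` and the sample values are made up for illustration.

```python
def detection_metrics(ground_truth, predictions):
    """Compute accuracy, precision, and recall for binary labels."""
    if len(ground_truth) != len(predictions):
        raise ValueError("Both lists must have one entry per image")

    pairs = list(zip(ground_truth, predictions))
    tp = sum(1 for g, p in pairs if g and p)          # face found correctly
    tn = sum(1 for g, p in pairs if not g and not p)  # no face, none reported
    fp = sum(1 for g, p in pairs if not g and p)      # false alarm
    fn = sum(1 for g, p in pairs if g and not p)      # missed face

    total = len(pairs)
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Three positives and two negatives; the model misses one face
# and raises one false alarm.
ground_truth = [True, True, True, False, False]
predictions = [True, True, False, True, False]

metrics = detection_metrics(ground_truth, predictions)
print(metrics["accuracy"])  # 0.6
```

Accuracy alone can be misleading when positives and negatives are unbalanced, which is why the sketch also reports precision and recall.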

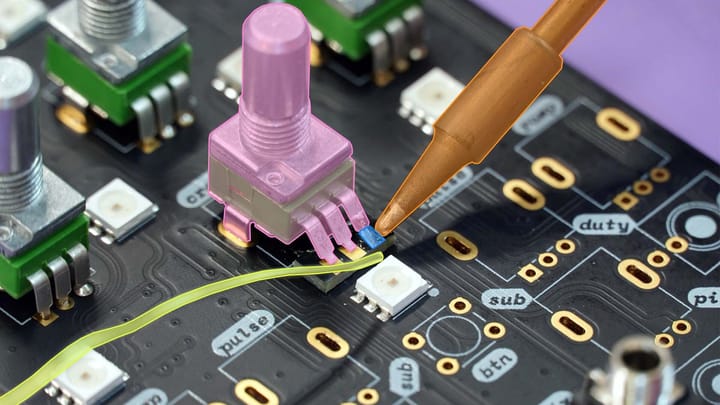

4. Choose your annotation tool wisely

You want easy-to-use tools that allow collaboration and integrate seamlessly into your existing workflows. Using a hammer to drive a screw or a wrench to hammer a nail would be silly. Choosing an annotation tool that aligns with your goals and workflow is vital.

For example, use bounding box annotation if you have a large data volume and need fast labeling. Use a segmentation tool if you have specific areas (such as eyes, nose, and mouth) that are important to label accurately.

5. Select a suitable annotation platform for your data

There are many different data annotation platforms for image annotation projects. Each one has its own features and limitations. Consider your preferences, goals, and current workflows before choosing a platform. The right platform will help you annotate more quickly and efficiently.

You may not be familiar with the annotation tool's features, so learning how it works is essential before labeling data. Some platforms offer training and tutorials to help you become familiar with their features. Other annotation tools may require additional training before you can begin labeling.

Try using a data annotation platform that has machine learning features. Machine learning tools can learn from previous annotations and apply what they've learned to new ones.

6. Leverage active-learning techniques to accelerate quality assurance

Active learning is a machine learning technique that helps you identify the most informative examples to annotate. It's a more efficient way of annotating data than labeling everything, and you can use it for annotation tasks like image classification, object detection, and segmentation.

Because the model itself points out which examples to label next, active learning can reduce the number of manual annotations, and therefore the human effort, needed to train your model.

It helps you progressively improve your models by annotating the subset of data likely to contain the most valuable information. You can combine active learning with other machine learning techniques, such as deep learning and reinforcement learning.
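
Uncertainty sampling is one common active-learning strategy: send annotators the images the current model is least sure about. The sketch below ranks images by the entropy of their predicted class probabilities. It assumes you already have per-image probabilities from some model; the file names, probabilities, and function names are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy of a probability distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Return the `budget` image names with the most uncertain predictions."""
    ranked = sorted(
        predictions,
        key=lambda name: entropy(predictions[name]),
        reverse=True,
    )
    return ranked[:budget]


# Hypothetical class probabilities from a partially trained model.
predictions = {
    "img_001.jpg": [0.98, 0.01, 0.01],  # confident
    "img_002.jpg": [0.40, 0.35, 0.25],  # very uncertain
    "img_003.jpg": [0.70, 0.20, 0.10],  # somewhat uncertain
}

print(select_for_annotation(predictions, budget=2))
# ['img_002.jpg', 'img_003.jpg']
```

In practice this selection step runs in a loop: annotate the chosen images, retrain, and rank the remaining unlabeled pool again.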

7. Don't forget about privacy and security issues

It's important to consider privacy and security issues when developing a model. Think about how you will store and use the data and whether it contains personally identifiable information. You must ensure that your data handling and your model don't violate any laws or regulations.

Your model needs to protect sensitive data, and you need to ensure the model is secure. You can use various techniques, such as encryption and obfuscation, to ensure that the data isn't exposed or compromised.
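
As one small illustration of obfuscation, identifiers such as file names or subject IDs can be replaced with keyed hashes before the data reaches annotators. This is only a sketch of pseudonymization using Python's standard `hmac` and `hashlib` modules; it is not a complete privacy solution, and the function name `pseudonymize` and the sample key are assumptions made for the example.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 hash, truncated for readability."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


# Placeholder key for the example only; a real key should be generated
# randomly and stored separately from the dataset.
key = b"example-key-do-not-use-in-production"

original_name = "jane_doe_passport_scan.jpg"
safe_name = pseudonymize(original_name, key) + ".jpg"
print(safe_name)
```

The same identifier always maps to the same pseudonym under a given key, so annotations can still be joined back to the source records by whoever holds the key.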

8. Test everything

Once you have an annotation tool picked out and trained models built, it's time to test them on real-world examples. The best way to ensure quality annotations is to create a rigorous review process. At the very least, your reviewers should be able to check for the following:

- Accuracy: Is the annotation correct?

- Consistency: Are there any inconsistencies in how annotations apply across different images or videos?

- Labels: Are objects labeled with classes and subclasses using consistent terminology across all images?

- Consistency with ground truth: Do annotations match the ground truth, and do multiple annotators agree on what's in an image?

To do this, you can have reviewers look at a subset of images and compare their annotations with the ground truth. This review will give you an idea of how accurate your annotations are and whether they need to be improved.
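
For bounding box annotations, a common way to compare an annotation with the ground truth is intersection over union (IoU). The sketch below assumes boxes are stored as `(x_min, y_min, x_max, y_max)` tuples keyed by image name and treats an IoU of at least 0.5 as a match; the threshold, data layout, and function names are illustrative choices rather than a standard your project must follow.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap; zero if the boxes do not intersect.
    inter_w = max(0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def agreement_rate(annotations, ground_truth, threshold=0.5):
    """Fraction of reviewed images whose box matches the ground truth."""
    shared = [name for name in annotations if name in ground_truth]
    if not shared:
        return 0.0
    matches = sum(
        1 for name in shared
        if iou(annotations[name], ground_truth[name]) >= threshold
    )
    return matches / len(shared)


# Hypothetical review sample: one exact match, one poorly placed box.
ground_truth = {"img_a": (0, 0, 10, 10), "img_b": (0, 0, 10, 10)}
annotations = {"img_a": (0, 0, 10, 10), "img_b": (5, 0, 15, 10)}

print(agreement_rate(annotations, ground_truth))  # 0.5
```

A low agreement rate on the review sample suggests that the guidelines or the annotator training need another pass before labeling continues.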

Conclusion

Image annotation is an essential step in creating a machine learning model. It lets you train your model on the data you want it to learn from and verify that it works as expected by testing its accuracy. The process is time-consuming, but it's essential to training AI models. Using skilled human annotators, you can help ensure your data sets are accurate and consistent.