NLP Data Annotation Strategies

Data annotation plays a crucial role in the development and evaluation of natural language processing (NLP) models. By labeling text data with relevant information, NLP models can better learn and perform specific tasks. This article explores effective strategies for data annotation for NLP, including techniques for data labeling, machine learning annotation, and text classification annotation.

Key Takeaways:

- Data annotation is a critical step in building and evaluating NLP models.

- Labeling text data with relevant information helps NLP models learn and perform tasks.

- Effective data annotation strategies include data labeling, machine learning annotation, and text classification annotation.

Choose the Right Annotation Scheme

Creating an effective annotation scheme is a crucial step in labeling data for natural language processing (NLP) tasks and models. The annotation scheme determines how the data will be labeled according to the specific task at hand.

The annotation scheme should be clear, consistent, and comprehensive to ensure accurate and reliable annotations. It is essential to choose an annotation scheme that aligns with the requirements of your NLP project.

There are existing annotation schemes available, such as those provided by the Linguistic Data Consortium and the Universal Dependencies project. These schemes have been developed and refined over time by experts in the field to cover a wide range of linguistic phenomena and tasks.

Alternatively, you can create a custom annotation scheme tailored to your specific needs. This approach allows you to define the annotation types, guidelines, and standards that align with your project goals.

When designing an annotation scheme, consider the following:

- Define the specific task to be annotated

- Select the applicable annotation types

- Provide clear and detailed guidelines for annotators to follow

- Measure inter-annotator agreement to ensure consistency

- Maintain a record of iterations and updates to the annotation scheme

Choosing the right annotation scheme is essential for accurate and meaningful data labeling. By following established schemes or creating custom ones, you can ensure the consistency and quality of your annotated data, laying a strong foundation for building high-performing NLP models.

Use the Appropriate Annotation Tools

Choosing the right annotation tools is essential for efficient annotation. When it comes to data annotation, there are several options available that can streamline the process and improve productivity. Let's explore some of the popular annotation tools in the market:

Keylabs

Keylabs is a versatile annotation platform developed by Keymakr that supports various annotation tasks, including all formats of image, video and Lidar. It provides a web-based interface with a rich set of annotation types and customization options. Keylabs also offers automation features like pre-labeling and data conversion, which can significantly speed up the annotation process while maintaining quality.

When choosing an annotation tool, it is crucial to consider factors such as compatibility with your workflow, budget, and preferences. Additionally, ensure that the selected tool supports the annotation scheme and data format you are working with. Automation features can also save time and reduce manual errors in the annotation process.

Train and Monitor the Annotators

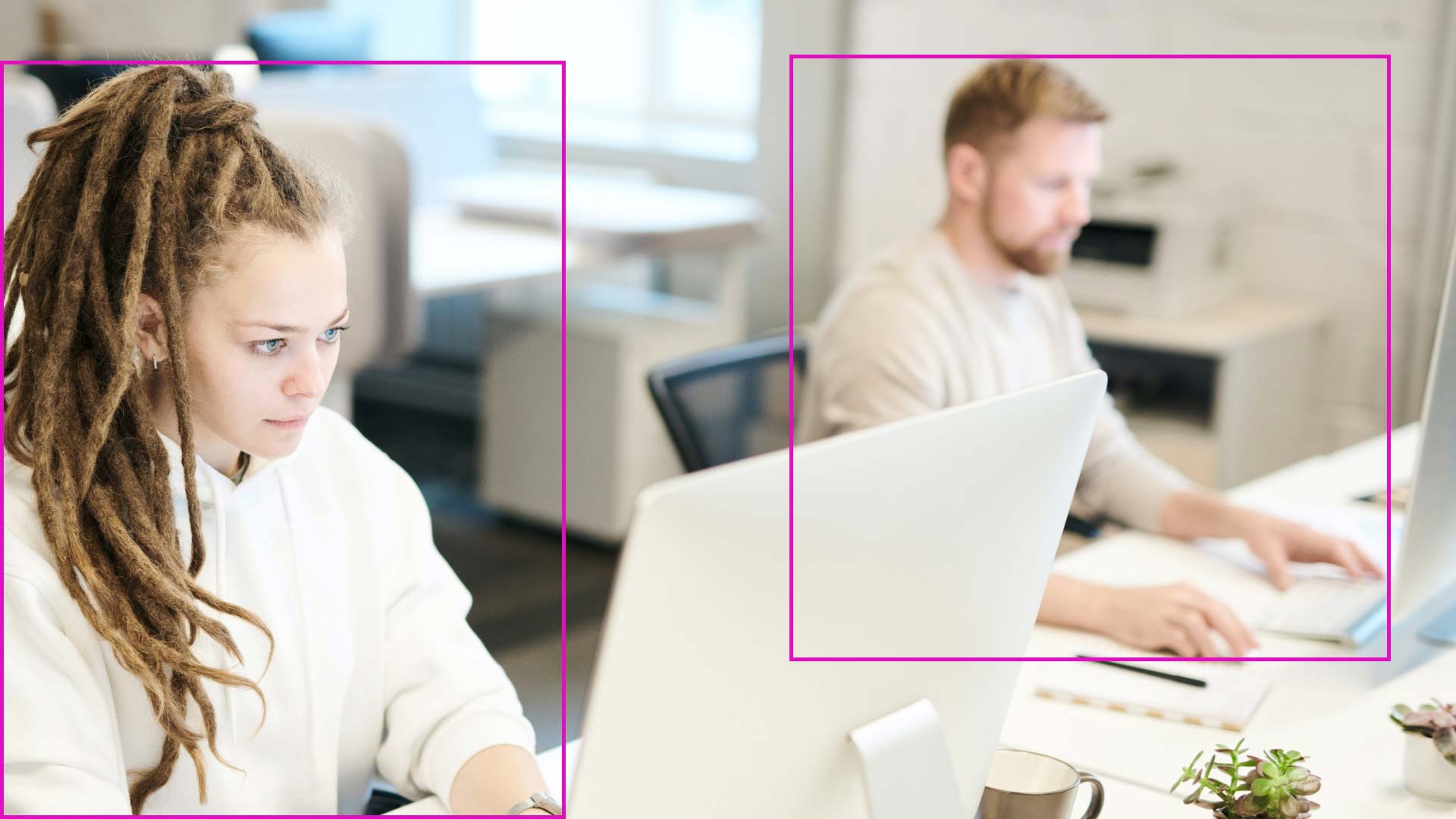

Annotators play a crucial role in the data annotation process. To ensure accurate and consistent annotations, it is essential to train and monitor the annotators throughout the project.

During the annotation training phase, annotators should receive comprehensive instructions on the annotation scheme and guidelines. This training helps them understand the task requirements and ensures consistency in the labeled data. Feedback, guidance, and support should be provided to address any doubts or conflicts that arise during the training process.

Once the training is complete, ongoing monitoring of the annotators is necessary to maintain data quality. Annotators should be regularly evaluated for their adherence to the annotation scheme and instructions. This monitoring helps identify and rectify any errors, inconsistencies, or biases in the annotations.

Inter-annotator agreement is a metric that measures the level of agreement between multiple annotators for the same annotations. It is an important measure of annotation consistency and can be used to identify areas where further training or clarification may be required. Additionally, monitoring annotation speed can provide insights into annotator performance and efficiency.

Validate and Refine the Annotated Data

Annotated data plays a critical role in the development and evaluation of NLP models. However, before using the annotated data for training or testing the models, it is important to validate and refine it to ensure accuracy and reliability.

During the validation process, errors, inconsistencies, biases, and outliers in the annotated data should be identified and corrected. This helps to improve the quality and integrity of the data, ensuring that the models are trained on reliable and consistent information. Validation can be performed using various techniques, such as data visualization and statistical analysis, to identify patterns or anomalies in the annotations.

To aid in the validation process, tools like SpaCy and NLTK can be utilized. SpaCy and NLTK are popular libraries for natural language processing and can provide valuable insights into the annotated data. These tools allow for in-depth analysis and visualization of the data, enabling data scientists to gain a better understanding of the annotations and identify any potential issues.

Once the data has been validated, the refinement process begins. This involves making necessary adjustments, enhancements, or improvements to the annotated data to ensure its quality and relevance. Data refinement can include tasks like standardization, normalization, or further enrichment of the annotations to align with the desired outcomes of the NLP models.

Overall, validating and refining annotated data are crucial steps in the data annotation process. By thoroughly examining the annotations, identifying and rectifying errors and biases, and utilizing tools like SpaCy and NLTK, data scientists can ensure the accuracy and reliability of the annotated data, thereby improving the performance and applicability of the NLP models.

Iterate and Evaluate the Annotation Process

The annotation process in data annotation for NLP is an iterative one, requiring regular evaluation to ensure optimal results. Monitoring the impact of the annotation process on model performance and gathering feedback from stakeholders is crucial for continuous improvement. Through careful evaluation and optimization, businesses can achieve their annotation objectives and enhance the overall performance of NLP models.

During the annotation process, it is essential to monitor the results and measure the impact on model performance. This allows businesses to assess the effectiveness of the annotation scheme and identify areas for improvement. By tracking the performance metrics of the annotated data, such as accuracy and precision, businesses can gain insights into the quality of the annotations and make necessary adjustments.

Feedback from annotators and other stakeholders is invaluable for refining the annotation process. By actively seeking feedback, businesses can identify pain points, clarify ambiguities, and address any challenges faced during the annotation process. This feedback loop helps optimize the annotation scheme, tools, annotators' performance, and the data itself to align with the specific business objectives.

Documenting changes and versions throughout the annotation process is vital for future reference and traceability. This documentation ensures transparency and facilitates reproducibility, making it easier to revisit previous iterations of the annotation process if needed. Additionally, it allows businesses to analyze the effectiveness of different approaches and make informed decisions for future annotation projects.

Optimization of the annotation scheme, tools, annotators, and data should be an ongoing process based on feedback and suggestions. By analyzing the feedback received and identifying areas of improvement, businesses can refine their annotation process to achieve better results and meet their business objectives effectively.

Considerations for Data Annotation

When it comes to data annotation, there are several important considerations to keep in mind. These considerations can help streamline the annotation process, optimize the use of resources, and ensure the quality and accuracy of the annotations.

User-Friendly Annotation Tools

Choosing the right annotation tools is crucial for efficient and effective data annotation. User-friendly tools such as Keylabs can greatly enhance the annotation workflow. The platform provides intuitive interfaces, automation features like pre-labeling, and support for various annotation types. By using user-friendly annotation tools, annotators can work more efficiently and produce high-quality annotations.

Incorporating Large Language Models (LLMs)

Large Language Models (LLMs) have revolutionized the field of natural language processing. These models have been trained on vast amounts of text data, enabling them to generate high-quality annotations automatically. By incorporating LLMs into the annotation process, annotators can leverage their capabilities to speed up annotation tasks and improve annotation accuracy. This integration can be particularly beneficial when dealing with large volumes of data.

Utilizing Zero-Shot NER Annotation Pipeline

The zero-shot Named Entity Recognition (NER) annotation pipeline is another powerful tool in the data annotation arsenal. This pipeline allows annotators to label entities that were not present in the training data. By leveraging the knowledge and capabilities of the model, the zero-shot NER annotation pipeline can accurately predict and annotate unseen entities. This approach saves time and effort by reducing the need for manual intervention in every annotation task.

Ensuring Trusted Annotations through Training and Verification

To ensure the quality and reliability of annotations, proper training and verification processes must be implemented. Annotators should undergo thorough training on the annotation guidelines, annotation scheme, and specific task requirements. Regular feedback, guidance, and support should be provided to address any uncertainties or challenges. Additionally, incorporating a verification step where annotations are reviewed and validated by experienced annotators can further enhance the trustworthiness of the annotations.

By considering these key factors - using user-friendly annotation tools, incorporating LLMs for automated annotation, utilizing zero-shot NER annotation pipeline, and ensuring trusted annotations through proper training and verification - data annotation can be effectively optimized and yield high-quality results for NLP models.

Industry-Specific Data Annotation

Different industries have unique requirements when it comes to data annotation. Whether it's for healthcare applications, sentiment analysis and product recommendations in the retail sector, fraud detection in finance, autonomous vehicles in the automotive industry, or manufacturing and quality control in the industrial sector, customized data annotation plays a crucial role.

In the medical field, medical data annotation is used to categorize and label healthcare-related information. This enables the development of applications for patient diagnosis, treatment monitoring, and medical research.

Retail data annotation focuses on analyzing customer sentiments and preferences. By annotating data related to customer reviews, product descriptions, and social media interactions, businesses can gain valuable insights for targeted marketing campaigns and personalized recommendations.

Finance data annotation is essential for fraud detection and risk mitigation. Annotating data related to financial transactions, customer records, and suspicious activities helps build robust models that can identify potential fraud and ensure secure transactions.

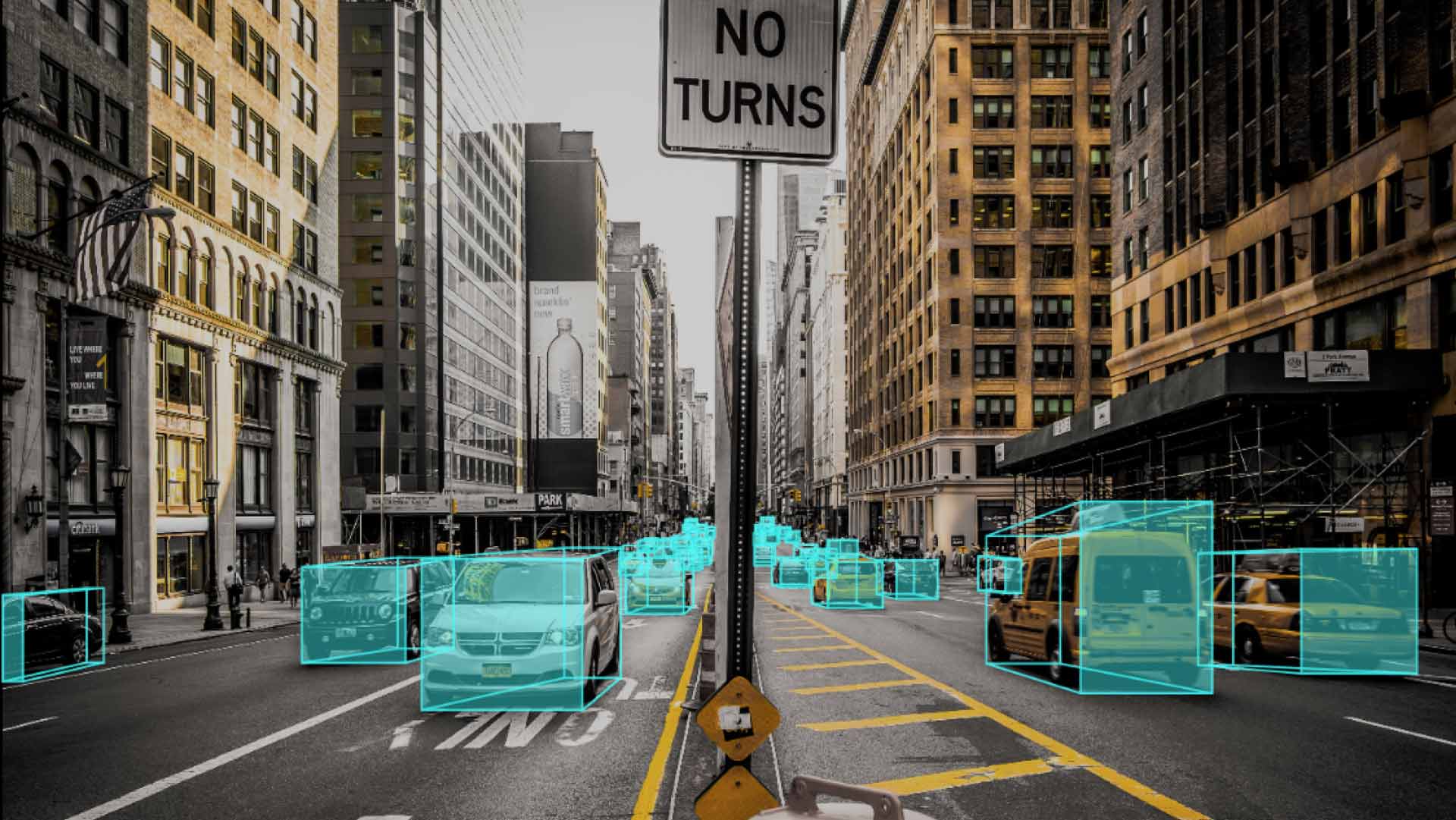

When it comes to the automotive industry, automotive data annotation is crucial for the development of autonomous vehicles. Annotating data related to object recognition, traffic signs, and road conditions helps train machine learning algorithms to make accurate decisions in real-world driving scenarios.

Industrial data annotation plays a vital role in manufacturing and quality control processes. By annotating data collected from sensors, cameras, and other devices, manufacturers can optimize production lines, monitor product quality, and identify potential defects.

Conclusion

Effective data annotation is essential for optimizing NLP models and achieving higher accuracy and performance. By implementing the right data annotation strategies, utilizing appropriate tools, training and monitoring annotators, validating and refining annotated data, iterating and evaluating the annotation process, considering industry-specific needs, and adhering to best practices, NLP models can be significantly enhanced.

Choosing the right annotation scheme is critical to ensure that data is labeled accurately and consistently. It is important to align the annotation scheme with the specific task and model requirements. Existing schemes from reputable sources like the Linguistic Data Consortium or the Universal Dependencies project can be utilized, or a custom scheme can be developed to meet specific needs.

Annotator training and monitoring play a vital role in ensuring consistent and high-quality annotations. Providing comprehensive training, clear guidelines, and continuous feedback can improve annotator performance. Monitoring inter-annotator agreement and annotation speed can help identify areas for improvement and enhance overall annotation quality.

FAQ

What is data annotation for NLP?

Data annotation for NLP involves labeling text data with relevant information to help NLP models learn and perform specific tasks.

Why is the annotation scheme important in data annotation?

The annotation scheme is crucial for labeling data according to the task and model, ensuring clarity, consistency, and comprehensiveness.

Are there existing annotation schemes that can be utilized?

Yes, existing annotation schemes from organizations like the Linguistic Data Consortium or the Universal Dependencies project can be used, or a custom scheme can be created.

How should annotators be trained and monitored?

Annotators should receive training on the annotation scheme and guidelines, and their performance should be monitored to ensure consistent and accurate annotations.

What should be done before using annotated data for training or testing a model?

Annotated data should be validated and refined to check for errors, inconsistencies, biases, and outliers. Tools like SpaCy or NLTK can assist in analysis and data enhancement.

How should the annotation process be evaluated?

Regular evaluation is essential. Monitoring results, measuring the impact on model performance, and gathering feedback are important for refining the annotation process.

Are there any considerations for data annotation?

Yes, considerations include utilizing user-friendly annotation tools, incorporating Large Language Models (LLMs) for automated annotation, and ensuring trusted annotations through training and verification.

What are some industry-specific data annotation requirements?

Different industries have specific needs. Medical data annotation is used for healthcare applications, retail data annotation for sentiment analysis and product recommendations, finance data annotation for fraud detection, automotive data annotation for autonomous vehicles, and industrial data annotation for manufacturing and quality control.

How does effective data annotation enhance NLP models?

By implementing the right annotation scheme, using appropriate tools, training and monitoring annotators, validating and refining the annotated data, iterating and evaluating the process, and considering industry-specific needs, NLP models can achieve higher accuracy and performance.