Implementing Real-Time Semantic Segmentation in Your Projects

Real-time semantic segmentation is a powerful technique in computer vision that allows for the accurate and fast segmentation of objects in images and videos. By implementing real-time semantic segmentation algorithms in your projects, you can enhance your machine learning and computer vision capabilities. In this article, we will explore the different techniques and tools available for implementing real-time semantic segmentation and how they can be applied to various applications.

Key Takeaways:

- Real-time semantic segmentation enables accurate and fast object segmentation in images and videos.

- Implementing real-time semantic segmentation algorithms enhances machine learning and computer vision capabilities.

- Various techniques and tools are available for implementing real-time semantic segmentation in different applications.

- Real-time semantic segmentation can be applied to tasks such as object recognition, image analysis, and video processing.

- Deep learning methods play a crucial role in achieving high-quality real-time semantic segmentation results.

Understanding Image Segmentation in Computer Vision Applications

Image segmentation plays a crucial role in the field of computer vision, enabling the categorization and analysis of objects within digital images. By segmenting the contents of an image into different categories, we gain a deeper understanding of the objects present and can perform subsequent analysis more accurately and efficiently.

Diverse applications benefit from image segmentation, ranging from foreground-background separation to the analysis of medical images, background editing, vision in self-driving cars, and the examination of satellite images. Let's explore how image segmentation enhances these specific fields and why it is vital in each context.

Foreground-Background Separation

Image segmentation facilitates the separation of foreground objects from the background, allowing for a more focused analysis of the elements of interest. This application is particularly useful in various areas, such as video editing, where it helps isolate subjects and enables the addition or removal of backgrounds to enhance visual compositions.

Analysis of Medical Images

In the medical field, image segmentation plays a crucial role in understanding and diagnosing diseases. It enables the identification and isolation of specific structures or regions of interest within medical images, such as organs, tumors, or blood vessels. Accurate segmentation aids physicians in making accurate diagnoses and planning effective treatment strategies.

"Accurate segmentation of medical images using computer vision techniques greatly improves diagnostic precision and enhances patient care."

Background Editing

Image segmentation is employed in various image editing applications to modify or replace the background of an image while preserving the foreground subjects. This technique finds extensive use in industries such as product photography, graphic design, and e-commerce, where it enables the creation of visually appealing and contextually relevant compositions.

Vision in Self-Driving Cars

In the realm of autonomous vehicles, image segmentation is critical for interpreting the surrounding environment and identifying objects of interest. By segmenting the different elements within a scene, self-driving cars can accurately perceive and navigate through their surroundings, ensuring safety and efficient operation.

Analysis of Satellite Images

Image segmentation is instrumental in extracting valuable insights from satellite imagery. Whether it's assessing the health of crops, monitoring urban development, or identifying geological formations, segmentation allows us to separate and analyze different regions, enabling informed decision-making and deeper understanding of the observed data.

Understanding image segmentation is fundamental to unlocking the full potential of computer vision applications. By accurately delineating and categorizing objects within images, we can extract valuable information, improve decision-making, and enhance a wide range of industries, from healthcare to autonomous vehicles. In the following sections, we will explore various tools and techniques for implementing image segmentation and how they can elevate your computer vision projects.

Introducing PixelLib Library for Real-Time Image and Video Segmentation

In the field of computer vision, real-time object segmentation in images and videos plays a crucial role in various applications. The PixelLib Library is a powerful tool that simplifies the implementation of real-time object segmentation with just a few lines of Python code. It offers a range of features, including semantic and instance segmentation of objects, custom training of segmentation models, background editing, and object extraction.

One of the key advantages of PixelLib is its support for both Linux and Windows operating systems. By integrating with the PyTorch backend and utilizing the PointRend segmentation architecture, PixelLib delivers faster and more accurate segmentation results. This flexibility allows developers to seamlessly incorporate real-time object segmentation into their projects, regardless of the operating system they are using.

Key Features of PixelLib Library

- Object Segmentation: PixelLib enables the accurate and efficient segmentation of objects in images and videos.

- Semantic and Instance Segmentation: It supports both semantic and instance segmentation, allowing for a detailed understanding and analysis of objects present in an image or video.

- Custom Training: Developers have the flexibility to train and fine-tune segmentation models according to their specific requirements and datasets.

- Background Editing: PixelLib offers the capability to edit the background of segmented objects, providing additional control and creativity in image and video processing tasks.

- Object Extraction: The library provides functionality to extract segmented objects, enabling further analysis and utilization of the segmented data.

PyTorch and PointRend Integration

PixelLib leverages the power of PyTorch, a popular deep learning framework, as its backend. This integration enables efficient computation and optimization of segmentation models, resulting in faster and more accurate object segmentation.

Additionally, PixelLib utilizes the PointRend segmentation architecture, which introduces a novel approach to semantic segmentation. By focusing on utilizing fewer computational resources, PointRend achieves exceptional segmentation accuracy, making it an ideal choice for real-time computer vision applications.

Table: Comparing PixelLib with other Libraries

| Features | PixelLib | Library A | Library B |

|---|---|---|---|

| Object Segmentation | ✓ | ✓ | ✗ |

| Semantic and Instance Segmentation | ✓ | ✗ | ✓ |

| Custom Training | ✓ | ✗ | ✓ |

| Background Editing | ✓ | ✗ | ✗ |

| Object Extraction | ✓ | ✓ | ✗ |

As seen in the comparison table, PixelLib outperforms other libraries in terms of its comprehensive feature set for object segmentation.

Implementing real-time object segmentation in your projects has never been easier. With the PixelLib Library, developers can harness the power of semantic and instance segmentation, custom training, background editing, and object extraction. The integration with PyTorch and the utilization of the PointRend segmentation architecture further enhance the speed and accuracy of object segmentation. Whether you are working on Linux or Windows, PixelLib empowers you to unlock the full potential of real-time image and video segmentation.

Comparing Mask R-CNN and PointRend for Real-Time Image Segmentation

When it comes to real-time image segmentation, two prominent architectures that often come into consideration are Mask R-CNN and PointRend. While both architectures have their merits, they differ in terms of accuracy and speed performance, making each suitable for different real-time computer vision applications.

Mask R-CNN is a widely recognized architecture that has proven to be effective for image segmentation tasks. It offers a good balance between accuracy and speed, making it a popular choice for various computer vision applications. However, when it comes to real-time scenarios, Mask R-CNN may face challenges in maintaining high inference speed without sacrificing accuracy.

On the other hand, PointRend is an architecture that provides more accurate segmentation results with high inference speed. It focuses on refining the output of object boundaries and capturing fine-grained details, resulting in improved segmentation outputs. PointRend is specifically designed for real-time computer vision applications, making it an excellent choice when accuracy is of utmost importance.

To provide a better understanding of the differences between Mask R-CNN and PointRend, the following table summarizes their key features in terms of segmentation results, accuracy, and speed performance:

| Architecture | Segmentation Results | Accuracy | Speed Performance |

|---|---|---|---|

| Mask R-CNN | Good | High | Moderate |

| PointRend | Better | High | Fast |

As seen in the table, Mask R-CNN delivers good segmentation results and maintains a high accuracy level. However, in terms of speed performance, it falls into the moderate range. On the other hand, PointRend provides better segmentation results while maintaining high accuracy and achieving faster inference speeds.

Considering the real-time nature of many computer vision applications, PointRend's combination of high accuracy and fast performance makes it a preferable choice over Mask R-CNN for real-time image segmentation tasks.

Installing and Configuring PixelLib for Real-Time Semantic Segmentation

To implement real-time semantic segmentation using PixelLib, proper installation and configuration of the library are required. Follow the steps below:

- Ensure you have a compatible version of Python installed on your system. PixelLib supports Python version 3.7 and above. If you don't have Python installed or your version is incompatible, download and install the appropriate version from the official Python website.

- Next, install a compatible version of Pytorch as specified by PixelLib. Pytorch is a deep learning framework that serves as the backend for PixelLib's segmentation models.

- Install the Pycocotools library, which is required for working with datasets in the COCO format. Pycocotools provides tools for parsing and manipulating the COCO dataset, a widely used benchmark for object detection and segmentation tasks. Make sure to update Pycocotools to the latest version to ensure compatibility with PixelLib.

- If you already have PixelLib installed on your system, you can upgrade it to the latest version using the following command:

!pip install pixellib --upgradeOnce you have completed these installation and configuration steps, you will be ready to utilize PixelLib for real-time semantic segmentation in your projects.

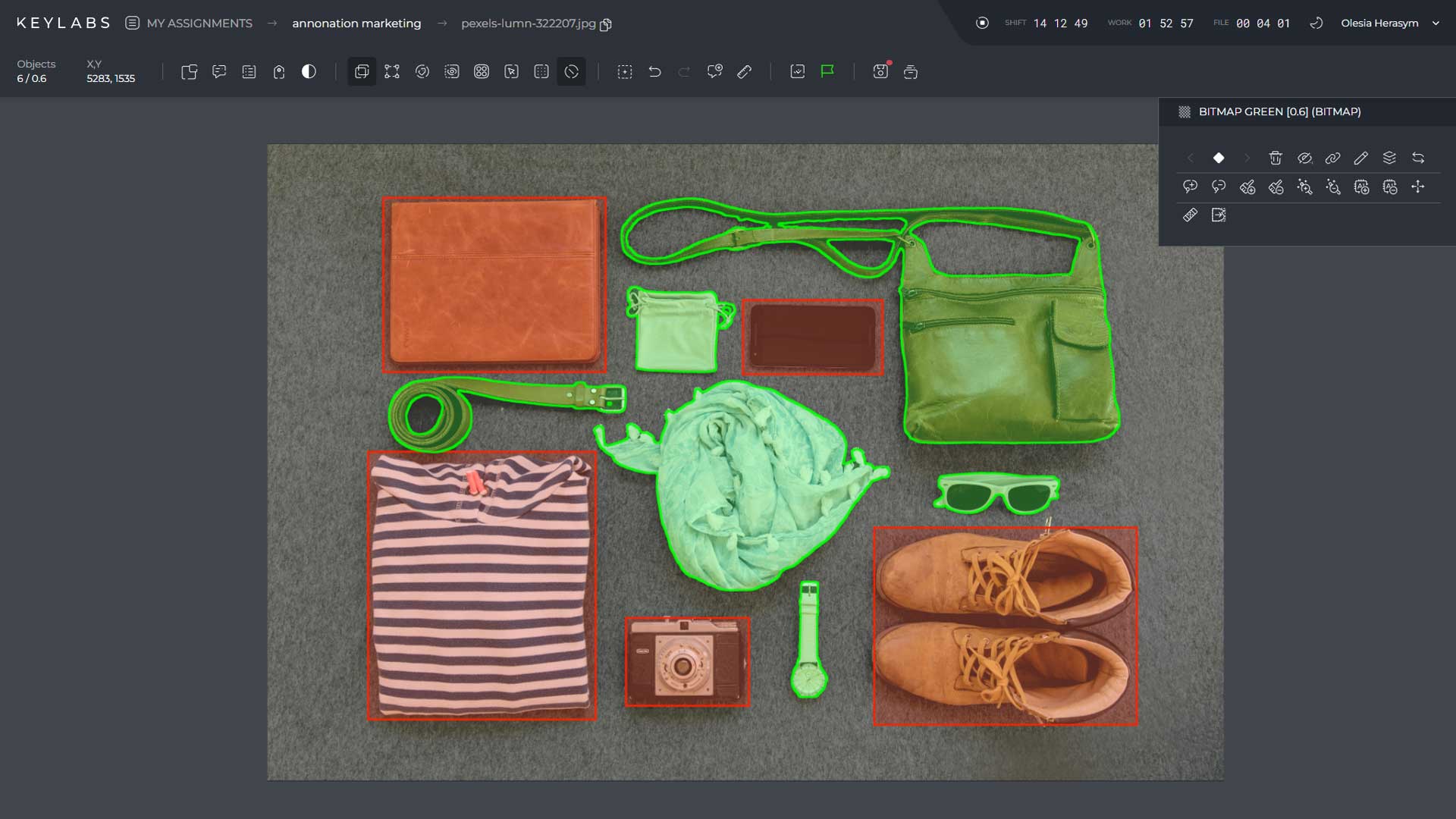

Performing Image Segmentation with PixelLib in Python

Performing image segmentation with PixelLib is a straightforward process that involves using a few lines of Python code. By leveraging the powerful capabilities of the PixelLib library, you can achieve accurate and efficient segmentation of objects in your images. Let's explore the steps involved in performing image segmentation using PixelLib.

Segmentation Results and Object Details

The segmentation results returned by the segmentImage function provide valuable information about the segmented objects in the image. The results include:

- Object coordinates: Bounding box coordinates of each segmented object

- Class IDs: Unique identifiers for each detected object class

- Class names: Names of the detected object classes

- Object counts: Total count of segmented objects

- Scores: Confidence scores for each segmented object

- Masks: Mask values representing the segmented objects

These segmentation results enable you to extract valuable insights and details about the objects in your images for further analysis and processing.

Below is an example of the Python code snippet used for performing image segmentation with PixelLib:

from pixellib.instance import instanceSegmentation

segmentation_model = instanceSegmentation()

segmentation_model.load_model("pointrend_mask_rcnn.h5")

segmentation_results = segmentation_model.segmentImage("path_to_image.jpg", show_bboxes=True, output_image_name="segmented_image.jpg")

With just a few lines of code, you can leverage the power of the PixelLib library and the PointRend model to perform image segmentation with ease.

| Object | Coordinates | Class ID | Class Name | Score | Mask |

|---|---|---|---|---|---|

| Object 1 | (x1, y1, x2, y2) | 1 | Person | 0.986 | |

| Object 2 | (x3, y3, x4, y4) | 2 | Car | 0.934 | |

| Object 3 | (x5, y5, x6, y6) | 3 | Dog | 0.897 |

Understanding Segmentation Results and Extracting Objects with PixelLib

The segmentation results returned by the PixelLib library provide valuable information about the segmented objects in an image. These results include various parameters that can be used to analyze and utilize the segmentation data.

The coordinates of the segmented objects represent the bounding boxes that enclose each object. This information allows for precise localization and positioning of the objects within the image.

The class IDs and class names associated with each object indicate the category or type of the objects. This classification enables a deeper understanding of the segmented objects and facilitates further analysis based on their specific attributes.

The object counts provide the total number of segmented objects in the image. This count offers insights into the complexity and richness of the segmented scene, which can aid in evaluating the effectiveness of the segmentation process.

The scores associated with each segmentation represent the confidence level or probability of accurately classifying an object. These scores can be used to filter out less reliable segmentations or prioritize objects with higher confidence scores for subsequent analysis.

The masks generated by PixelLib define the mask values for each object, which enable pixel-level segmentation. These binary masks represent the pixel-wise areas corresponding to each object, allowing for more precise and granular analysis of the objects and their boundaries.

Additionally, PixelLib provides the capability to extract the segmented objects from the original image. This feature allows for easy isolation and separation of the objects, enabling more focused analysis or utilization of the extracted objects for downstream tasks.

The segmentation results, including object coordinates, class IDs, class names, object counts, scores, masks, and extracted objects, provide a comprehensive and detailed understanding of the segmented image. This facilitates advanced analysis, object manipulation, and further exploration of the segmented data.

To better illustrate the significance of segmentation results, consider the following example:

| Object ID | Class | Coordinates | Score | Mask |

|---|---|---|---|---|

| 1 | Car | (xmin: 400, ymin: 200, xmax: 600, ymax: 400) | 0.92 | |

| 2 | Person | (xmin: 150, ymin: 300, xmax: 250, ymax: 500) | 0.86 | |

| 3 | Dog | (xmin: 700, ymin: 150, xmax: 800, ymax: 250) | 0.78 |

In the example above, the segmentation results for an image containing a car, a person, and a dog are presented. The object coordinates indicate the position of each object within the image, while the class IDs and class names categorize the objects into their respective classes. The scores demonstrate the confidence level of each segmentation, with higher scores indicating more reliable segmentations. The masks visualized in the images provide a clear representation of the object boundaries.

By extracting these segmented objects, further analysis or utilization can be performed, such as object recognition, object tracking, or integrating the segmented objects into other applications.

Deep Learning Methods for Real-Time Semantic Segmentation

Deep learning has revolutionized the field of computer vision, providing a range of methods for real-time semantic segmentation. In this section, we will explore some popular deep learning architectures that have proven to be effective in achieving accurate and real-time segmentation results.

Fully Convolutional Network (FCN)

The Fully Convolutional Network (FCN) is a deep learning architecture specifically designed for semantic segmentation tasks. Unlike traditional convolutional neural networks (CNNs) used for image classification, FCNs preserve spatial information by replacing fully connected layers with convolutional layers. This enables FCNs to output segmentation maps at the same resolution as the input image, allowing for pixel-wise classification and real-time inference.

U-net

U-net is a popular architecture commonly used for biomedical image segmentation tasks. It is known for its U-shaped design, with a contracting path that captures high-level features and an expanding path that recovers the spatial resolution of the segmentation map. U-net incorporates skip connections between corresponding layers in the contracting and expanding paths, enabling the model to capture both local and global features, resulting in accurate and detailed segmentations.

Pyramid Scene Parsing Network (PSPNet)

The Pyramid Scene Parsing Network (PSPNet) is an architecture that utilizes a pyramid pooling module to capture context information at multiple scales. By aggregating features from different pyramid levels, PSPNet effectively captures global context while maintaining high-resolution details. This leads to precise and accurate segmentations, making PSPNet suitable for real-time semantic segmentation in various applications.

DeepLab

DeepLab is a family of deep learning architectures that have achieved state-of-the-art performance in semantic image segmentation. The main innovation of DeepLab is the use of atrous (dilated) convolutions, which enable the network to capture multi-scale context information without downsampling the feature maps. By combining atrous convolutions with other strategies like spatial pyramid pooling and dilated convolutional networks, DeepLab models achieve high accuracy and real-time performance.

These deep learning methods, including FCN, U-net, PSPNet, and DeepLab, have significantly advanced the field of real-time semantic segmentation. By leveraging the power of convolutional neural networks, these architectures provide accurate and efficient solutions for segmenting objects in images and videos.

Comparison of Deep Learning Methods for Real-Time Semantic Segmentation

To further understand the differences and capabilities of these deep learning methods, let's compare them in terms of various metrics such as computational efficiency, segmentation accuracy, and inference speed. The following table provides a comprehensive overview of these factors:

| Method | Computational Efficiency | Segmentation Accuracy | Inference Speed |

|---|---|---|---|

| FCN | Medium | High | Fast |

| U-net | Medium | High | Fast |

| PSPNet | High | Very High | Medium |

| DeepLab | High | Very High | Medium |

From the table, we can see that PSPNet and DeepLab achieve higher segmentation accuracy at the cost of increased computational complexity. FCN and U-net, on the other hand, provide a balance between accuracy and computational efficiency, making them suitable for real-time semantic segmentation in various applications.

"The use of deep learning methods for real-time semantic segmentation has significantly improved the accuracy and efficiency of object segmentation in computer vision applications."

By utilizing architectures such as FCN, U-net, PSPNet, and DeepLab, developers and researchers can achieve high-quality semantic segmentation results in real-time, bringing advancements to a wide range of fields, including autonomous driving, medical imaging, and natural scene understanding.

Conclusion

Real-time semantic segmentation is a game-changing technique in the field of computer vision that allows for fast and accurate object segmentation in images and videos. By integrating real-time semantic segmentation algorithms and tools into your projects, you can elevate your computer vision and machine learning capabilities.

The PixelLib library offers a user-friendly and efficient solution for real-time semantic segmentation, providing a range of features and functionalities. With deep learning methods such as FCN, U-net, PSPNet, and DeepLab, you can achieve high-quality segmentation results in real-time, enabling you to extract valuable insights from visual data.

By harnessing the power of real-time semantic segmentation, you can unlock new opportunities and applications in fields like autonomous vehicles, medical imaging, security systems, and more. With the rapid advancements in computer vision and machine learning, the future of object segmentation looks promising, and real-time semantic segmentation will continue to play a vital role in driving innovation in these domains.

FAQ

What is real-time semantic segmentation?

Real-time semantic segmentation is a technique in computer vision that allows for the accurate and fast segmentation of objects in images and videos in real-time.

What are some applications of image segmentation in computer vision?

Image segmentation is commonly used for tasks such as foreground-background separation, analysis of medical images, background editing, vision in self-driving cars, and analysis of satellite images.

What is PixelLib Library and what features does it provide for real-time segmentation?

PixelLib Library is a powerful tool that enables easy integration of real-time object segmentation in images and videos. It supports features such as semantic and instance segmentation of objects, custom training of segmentation models, background editing, and object extraction.

How does PointRend compare to Mask R-CNN for real-time image segmentation?

PointRend offers more accurate segmentation results with high inference speed compared to Mask R-CNN, making it a better choice for real-time computer vision applications.

How do I install and configure PixelLib for real-time semantic segmentation?

To install and configure PixelLib, you need to download and install a compatible version of Python, install a compatible version of Pytorch, install the Pycocotools library, and ensure it is up to date.

How can I perform image segmentation with PixelLib in Python?

To perform image segmentation with PixelLib, you need to import the necessary module and class, create an instance of the class, load the PointRend model, and use the segmentImage function, specifying the image path and optional parameters.

What information does the segmentation results returned by PixelLib provide?

The segmentation results returned by PixelLib include the coordinates, class IDs, class names, object counts, scores, and masks of the segmented objects, providing valuable information for object analysis.

What are some popular deep learning methods for real-time semantic segmentation?

Some popular deep learning methods for real-time semantic segmentation include Fully Convolutional Network (FCN), U-net, Pyramid Scene Parsing Network (PSPNet), and DeepLab.

How can real-time semantic segmentation enhance computer vision and machine learning projects?

Real-time semantic segmentation allows for accurate and fast object segmentation, enhancing the capabilities of computer vision and machine learning projects by providing valuable insights and improving subsequent analysis tasks.