Ensuring Data Quality With Outsourcing

“Poor data quality is enemy number one to the widespread, profitable use of machine learning.”

– Tomas C. Redman, Harvard Business Review, If Your Data Is Bad, Your Machine Learning Tools Are Useless

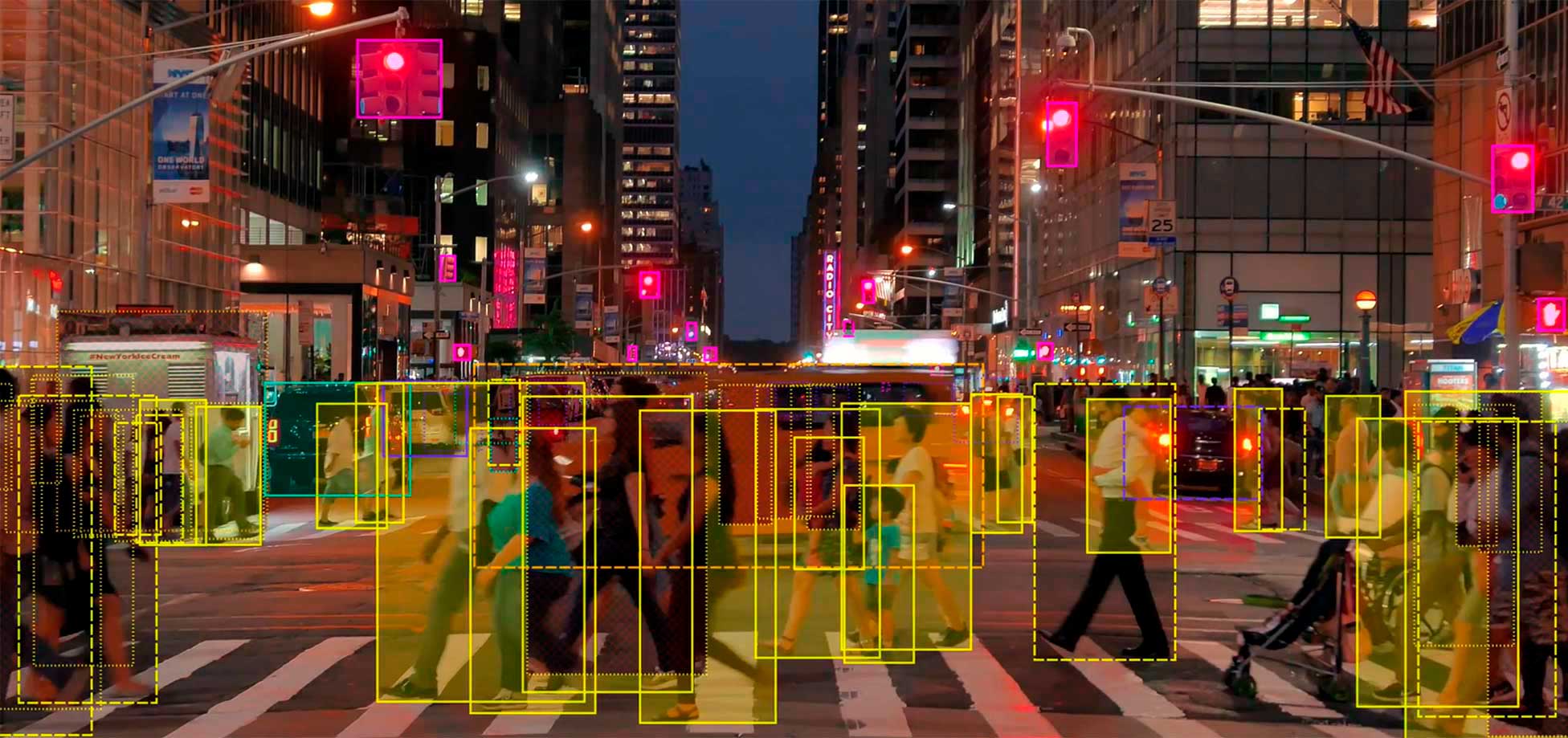

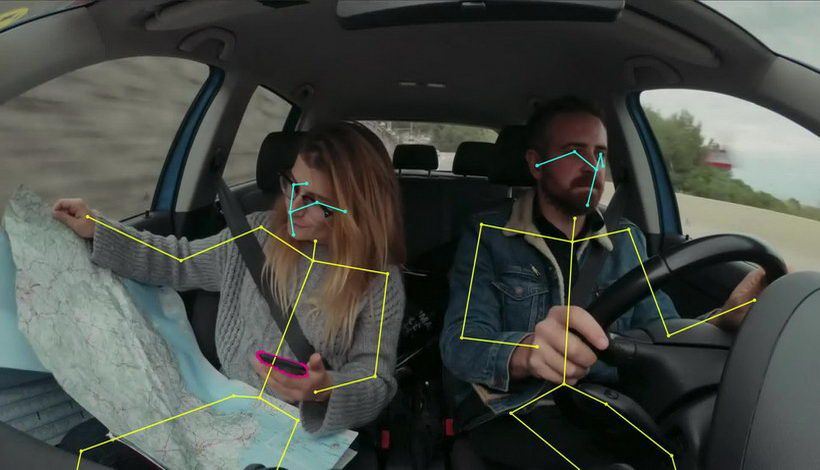

The most exciting, innovative machine learning projects in development today are reliant on access to quality data. Inaccuracies in training datasets inevitably lead to models that are not capable of achieving their stated goals in the real world. At the core of successful computer vision based AI models is unbiased, diverse training data, and that is annotated accurately.

However, the process of acquiring and labeling image and video data, and then subjecting that data to quality assurance checks can be costly and time consuming for companies of all sizes.

Professional annotation providers are capable of fulfilling the pressing need for high quality datasets and removing this burden from innovators. This blog will show how Keymakr’s processes and expertise are safeguarding data quality.

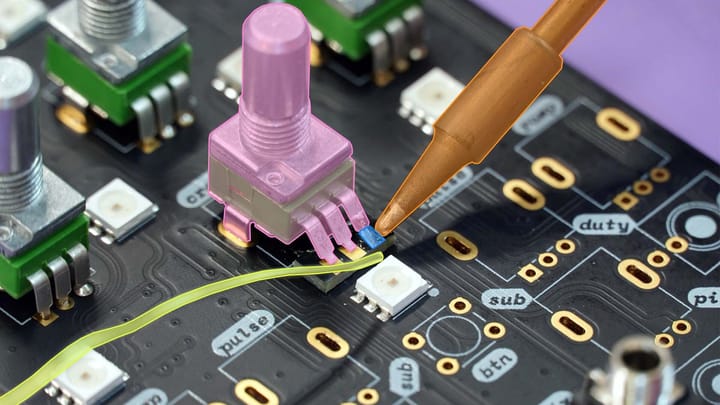

Video annotation | Keymakr

Quality control and verification

Rigorous quality control processes are, of course, crucial for ensuring that training datasets are fit for purpose. Instituting these systems correctly in-house can be a difficult challenge for many companies. Staffing QA teams and establishing novel verification procedures can be a distraction from the core aims of a project, and divert the valuable time of researchers and management.

Image and video data providers are able to leverage their experience with dataset creation as well as their proprietary technology to guarantee a frictionless QA process. Keymakr utilises three layers of human quality verification, followed by a final automated quality check. By making use of multiple instances of quality verification it is possible to create mistake free, annotated training data.

Data diversity

A lack of diversity in training data can lead to models that are incapable of interpreting the complexity of the real world. Training data for automated vehicles that only contains images from one city, in daylight, will result in a final model that is ill-equipped to perform in a different context or different light conditions.

However, it can be a challenge for machine learning operations to collect the range of diverse data that their projects need. Some images/video may not be accessible through the public web, and sometimes bespoke data has to be created to fulfill a specific need.

Outsourcing can provide a data collection and creation solution. Professional annotation services, like Keymakr, make use of data scraping tools and in-house studios to find and produce images and video that cutting edge models require.

Data volume

An important aspect of data quality is having access to precisely labeled data at sufficient volumes. Committing to this amount of dataset creation in-house means establishing a dedicated annotation department, and taking on all of the unavoidable hiring, management, and staffing cost burdens.

Increasing the amount of data will mean additional costs, and when data needs are lower, significant investment will have already been committed to an underutilised workforce.

Outsourcing allows for quick scaling up of quality data as and when development demands. Keymakr offers flexibility to computer vision projects that need to rapidly expand whilst maintaining a consistent level of quality and precision.

Expertise and established processes guarantee quality data

Maintaining a steady flow of accurate data is of paramount importance for the success of any computer vision project. Outsourcing relieves the pressure on developers by handing over QA processes to experienced dataset creation collaborators.

Keymakr makes use of proprietary annotation tools to create image and video training data that is precise and affordable.